From 12 Agents to 1

The mental model that prevents you from overengineering your next AI system.

It is 2026. People across the industry still mix up words like workflows, agents, tools, and multi-agent systems. Beyond terminology, this confusion has led to massively overengineered solutions.

Teams jump to multi-agent architectures because it sounds impressive and helps raise money. In reality, a simple workflow would have been faster to build, cheaper to run, and easier to debug. The result is bloated systems, wasted tokens, and debugging nightmares.

Our goal is to provide a clear mental model of what architecture to choose for your AI project: workflows vs. single agents vs. multi-agent systems.

Louis-François Bouchard from Towards AI has been working on this exact problem with his clients and distilled his decision framework into two YouTube videos: Stop Overengineering: Workflows vs AI Agents Explained and From Workflows to Multi-Agent Systems: How to Choose. He allowed me to take that framework and turn it into this article. Kudos to Louis-François!

This decision framework is a spectrum from simple to complex that tells you exactly what to build based on your actual constraints. The goal is to stay as far left on the complexity spectrum as possible while still solving your problem.

Here is what you will learn:

The fundamental difference between an agent and a workflow.

How to use the complexity spectrum to make architecture decisions.

When to rely on simple workflows for predictable tasks.

Why a single agent with tools is often enough for dynamic problems.

The exact breaking points that justify moving to a multi-agent system.

To apply this spectrum effectively, you must first define the terms. Here are the core misconceptions that lead to bad architecture decisions.

Before we continue, a quick word from the Decoding AI team. ↓

Go Deeper: Your Path to Agentic AI for Production

Most engineers know the theory behind agents, context engineering, and RAG. What they lack is the confidence to architect, evaluate, and deploy these systems in production. The Agentic AI Engineering course, built in partnership with Towards AI, closes that gap across 34 lessons (articles, videos, and a lot of code).

By the end, you will have gone from “I built a demo” to “I shipped a production-grade multi-agent system with evals, observability, and CI/CD.” Three portfolio projects, a certificate to back them up in interviews, and a Discord community with direct access to industry experts like Louis-François Bouchard and me.

Rated 4.9/5 ⭐️ by 300+ early students saying ”Every AI Engineer needs a course like this” and that is ”an excellent bridge from experimental LLM projects to real-world AI engineering.”

↓ Now, back to the article.

Clarifying the Confusion: Not Everything Is an Agent

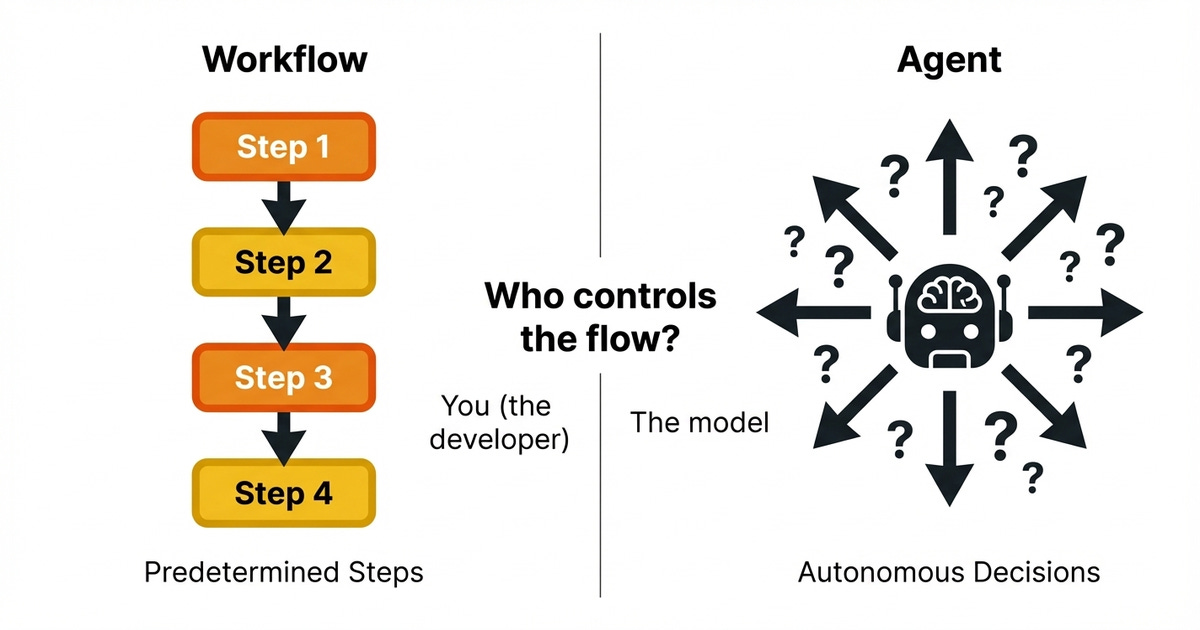

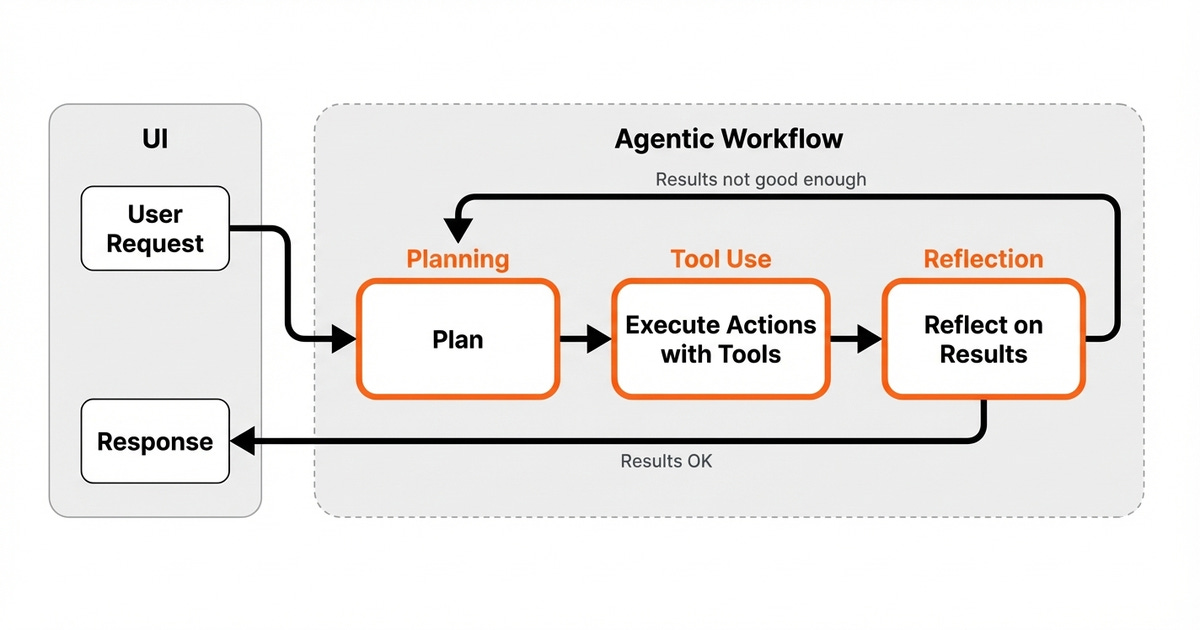

The first major misconception is that every LLM application is an agent. The key difference is autonomy. In a workflow, you control the flow.

You decide the steps and their order. In an agent, the model controls the flow. It decides what to do next based on the goal you give it.

If you can write down the exact sequence of steps in advance, you are building a workflow. You are not building an agent.

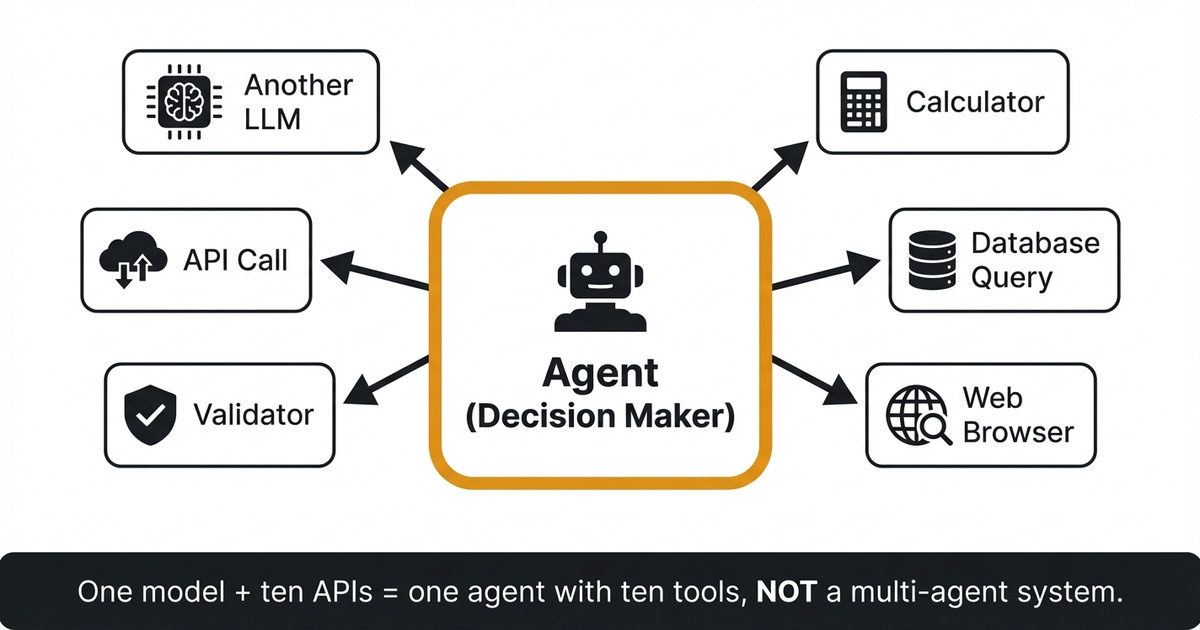

The second misconception is that tools are agents. A tool is a capability. It can be a calculator, a database query, a web browser, a validator, or an API call.

It can even be another LLM. An agent is the decision maker who chooses which tools to use and when.

If someone tells you they built a multi-agent system, but it is actually one model calling ten different APIs, that is not multi-agent. That is a single agent with ten tools.

This distinction matters. It defines how you architect, debug, and scale your system. It drives your core architecture choice between a workflow, a single agent with tools, or multiple agents.

The Complexity Spectrum: A Mental Model for Architecture Decisions

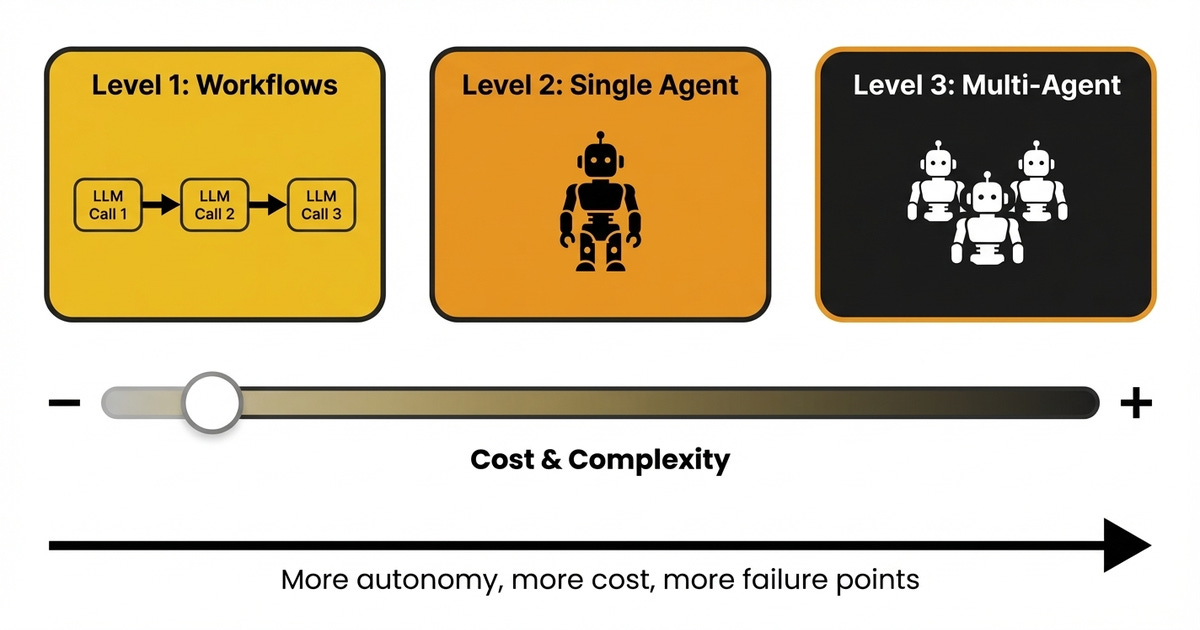

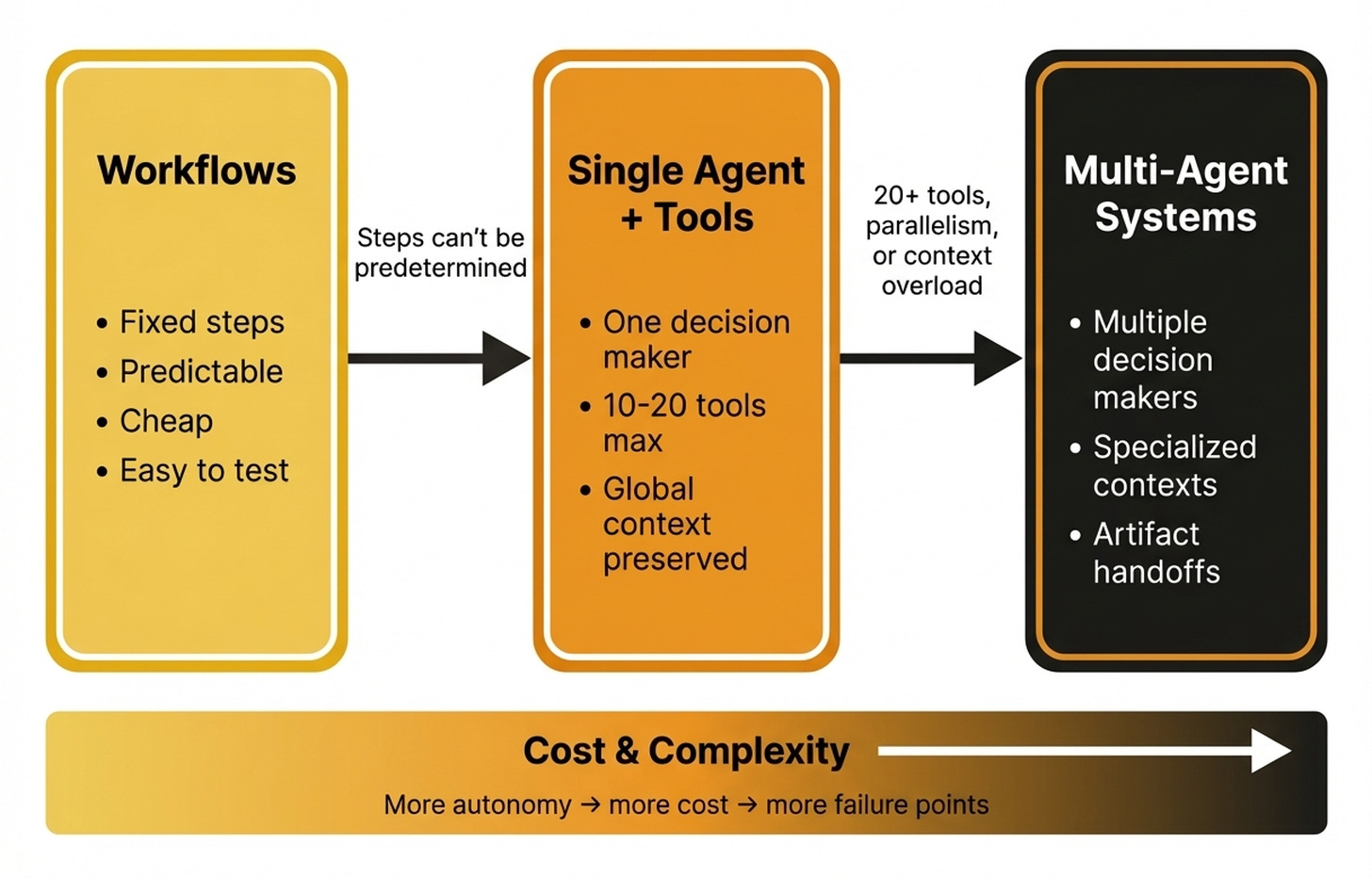

To make this architecture choice easier, we use a complexity spectrum. It is a slider going from the most control to the most autonomy. Your goal is to stay as far left as possible while still solving the problem.

Level 1 represents workflows. Here, you chain multiple LLM calls together in a predefined sequence. You control every step.

Level 2 represents a single agent with tools. The model makes decisions about what to do next. You have one decision maker and multiple capabilities.

Level 3 represents multi-agent systems. Here, you have multiple decision makers who need to coordinate with each other.

The core principle is straightforward. Move right on this spectrum only when you absolutely have to. Each step to the right increases costs, latency, and debugging complexity.

More LLM calls mean more tokens, more traces to follow, and more places where things can go wrong.

In practice, start simple and escalate only where things break. Write a prompt first. Test it.

Implement it with minimal complexity. Measure the results. Add what is missing.

If the model lacks information, add retrieval. If it needs calculations, add a tool. Only when you genuinely need autonomous decision-making should you reach for an agent.

Even then, start with one. The best AI systems are the simplest ones that reliably solve the problem. That usually means starting with workflows.

When a Workflow Is the Right Answer

Workflows are the right answer when your steps are known and stable. If the process is largely the same each time, regardless of input, a workflow is almost always the best choice.

Workflows win because they are predictable. They are easy to test because you can write unit tests for each step. They are easy to debug because you can trace exactly what happened when something goes wrong.

They are also cheap because you are not burning tokens on the model, figuring out what to do next.

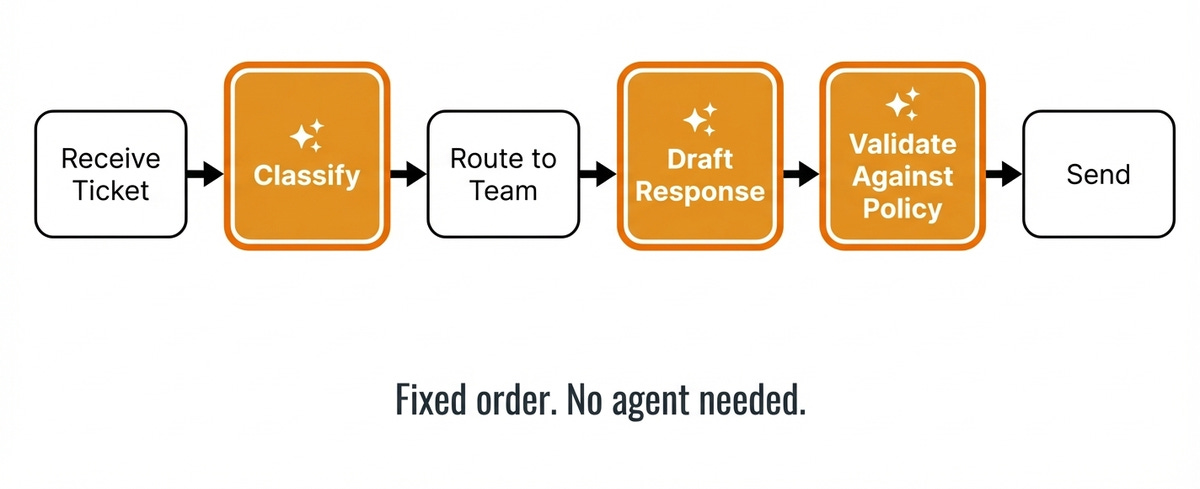

Consider a support ticket system. A ticket comes in. You classify it.

You route it to the right team. You draft a response from templates and context. You validate it against the policy.

Finally, you send it. Each step might involve an LLM call, but the model does not need to decide whether to classify before routing. That is always the order.

Building this as an agent adds overhead without adding capability.

Do not underestimate workflows. They are not limited to simple sequential chains. They can include routing to pick different models based on input.

They can use parallel execution with majority voting to aggregate answers. They can also use generator-evaluator loops where one LLM generates and another validates until quality criteria are met. They can even leverage tools in designs like the orchestrator-worker. These patterns handle complex tasks without any agent overhead.

If you can write down the exact sequence of steps in advance, like a recipe, it is a workflow.

When a Single Agent with Tools Wins

Sometimes the order of work is not fixed. You genuinely cannot write down the steps in advance. This happens when the path changes depending on what you discover along the way.

Maybe the first API call fails, and you need to try an alternative. Maybe the retrieved data is incomplete, and you need clarification. This is what agents handle well.

When is an agent worth the risk? Anthropic offers a useful framework. Agents make sense when the task is complex enough to need autonomous decisions and delivers real value.

Critically, the cost of errors and the cost of discovering those errors must be low. This is why AI coding agents are great. A human reviews the code before production, so mistakes are cheap to fix.

A purchasing agent who accidentally buys the wrong hardware makes an expensive error. You must match your architecture to your error tolerance [3].

The rule is to always start with one agent. A single agent with tools works best when tasks are tightly coupled and mostly sequential. It works well when global context matters, meaning step one affects step five.

It is also ideal when you need fewer than twenty tools and face strict budget or latency constraints.

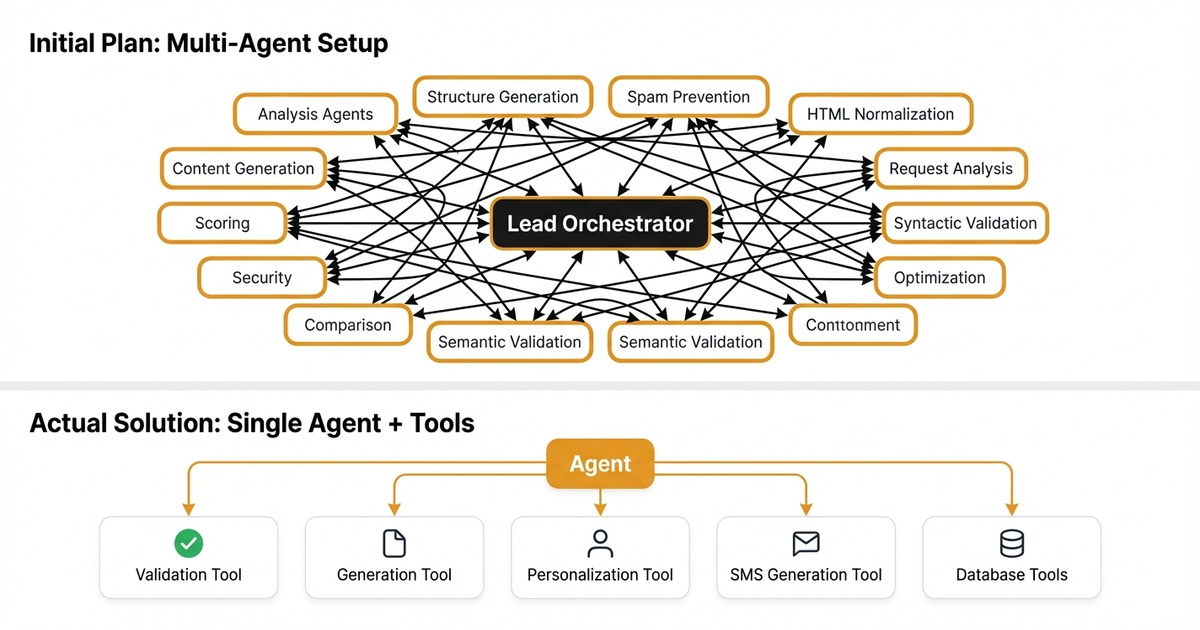

Take a marketing content platform from Louis-François’s client work at Towards AI. The client wanted AI-assisted content generation for emails, text messages, and promotional materials. Their initial specification called for a multi-agent setup with over a dozen specialized agents.

They wanted an orchestrator, a request analyzer, a content generator, and many others. On paper, it looked clean with specialists doing specialist work.

A single agent was the right call. The tasks were tightly coupled and sequential. The template choice affects the content.

Personalization depends on both content and contact data. Splitting this across multiple decision makers creates information silos and handoff errors. They did not need parallelism.

The flow was to plan, generate, validate, and fix if needed.

The key insight is that tools can be smart. A tool can have its own system prompt and use a different model. The validation tool can use its own LLM with instructions to catch errors.

The text message tool can treat character limits as deterministic engineering constraints instead of prompting problems. You get specialists, but you keep one brain to maintain context and make final decisions.

This results in a system that is faster to build, cheaper to run, and easier to debug. You get the same capabilities without the coordination overhead.

The Tool Count Problem: When One Agent Isn’t Enough

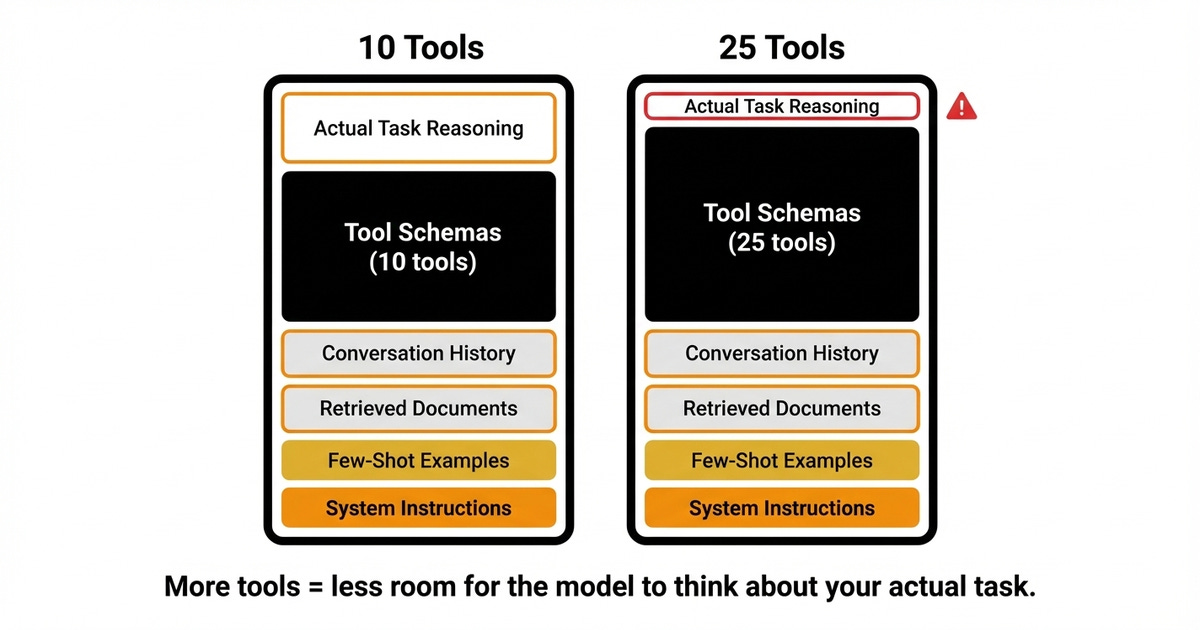

As your tool list grows, tool selection gets harder. This is one of the main ways agent systems quietly break down. It is also one of the clearest signals that splitting into multiple agents might be worth it.

Every tool has a name, description, and schema that the model needs in context to use correctly. The more tools you add, the more of your context budget you burn before the agent even starts thinking about the actual task. You also have to add system instructions, a few-shot examples, retrieved documents, and conversation history on top of that.

A single agent tends to work best with roughly 10 to 20 tools. Past that threshold, tool selection degrades. The agent has to choose among too many options in an already packed context.

This mechanism is known as context rot. LLM performance measurably degrades as context grows, well before hitting the advertised limit. Two forces drive this issue.

First, more context means more noise competing for the model’s attention. Second, models suffer from loss in the middle. They tend to attend more to the beginning and end of their context, underweighting information in the middle.

As your tool schemas and instructions pile up, the model gets worse at picking the right tool.

Managing context can reduce history and retrieved content, but not the tool schema load. Those definitions must always be there. The only approach that actually reduces how many tool definitions the model sees per call is splitting across agents.

If one agent sees only email tools and another only sees validation tools, each call stays smaller. Tool selection gets easier. Once you split tools across agents to keep calls small, you enter multi-agent territory.

When Multi-Agent Is Actually the Right Call

Specific reasons justify multiple agents, not because the architecture sounds impressive. There are four legitimate reasons to go multi-agent. First, you need true parallelism where tasks are genuinely independent and run simultaneously.

Second, you face context overload where instructions and tools degrade performance. Third, you need modularity to connect with third-party agent systems you do not control. Fourth, you have hard separation requirements like security boundaries or sensitive data handling.

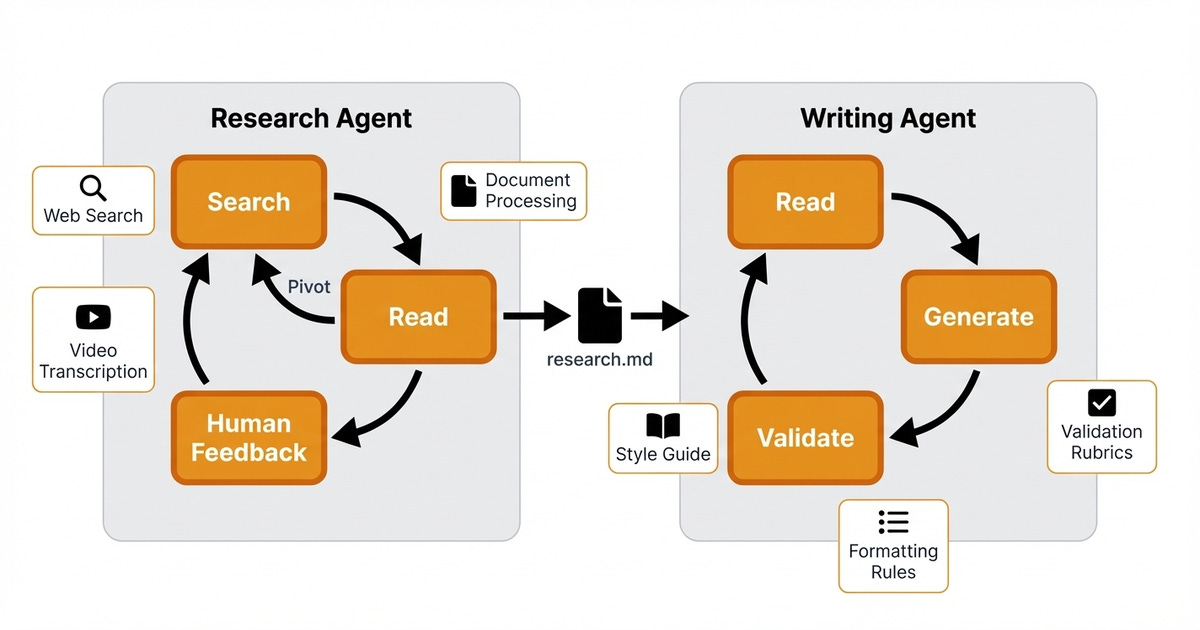

Consider the professional article generation system that Louis-François and I built as one of the projects for our Agentic AI Engineering course. We started with a single agent for research and writing but had to pivot because the two phases have fundamentally different needs.

The research phase is exploratory and dynamic. It needs flexibility and broad tool access across web search, video transcription, and document processing. The agent searches, reads, pivots based on what it finds, and iterates based on human feedback.

The writing phase is constrained and deterministic. It needs focused constraints, consistent style enforcement, and iterative refinement against fixed rubrics.

These agents communicate through explicit artifacts. The research agent produces a structured markdown file that the writer agent consumes as context. There is no complex runtime orchestration.

It is just a sequential handoff with a clear contract between them. Each agent has its own optimized context without the bloat of carrying the other’s tools.

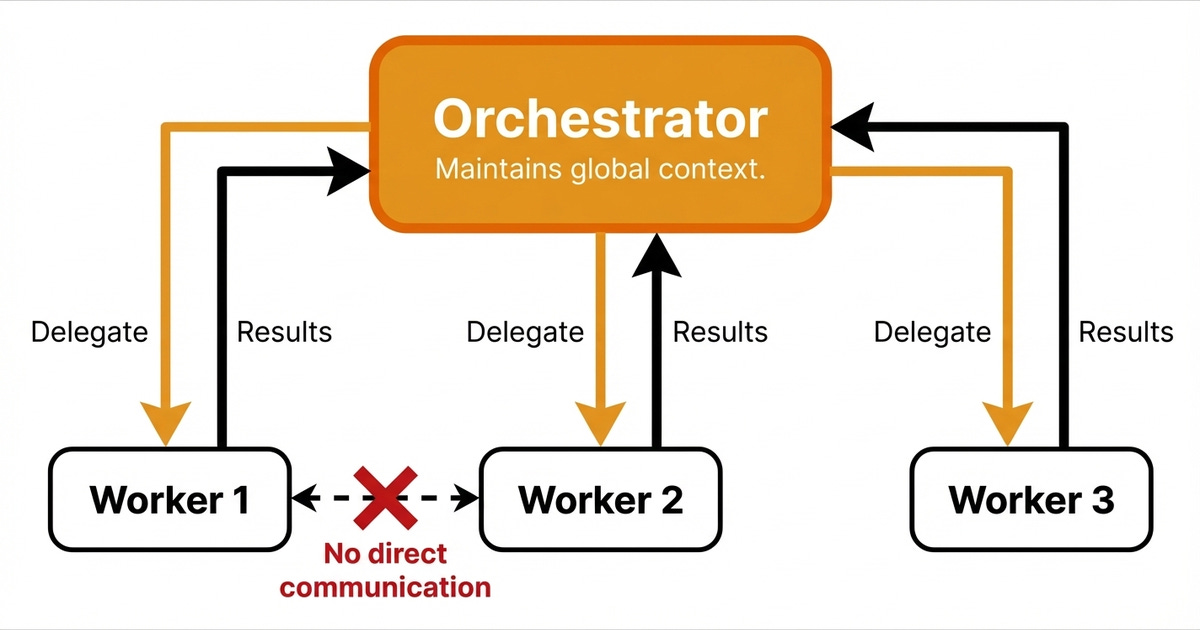

If you do go multi-agent, we recommend the plan-and-execute combined with the orchestrator-worker pattern. You do not want everyone talking to everyone. One orchestrator maintains the main context and delegates specific tasks to worker agents.

Then, it synthesizes the results. This prevents the information silos that kill multi-agent systems.

Multi-agent systems can simplify individual contexts and enable specialization. However, they increase coordination costs. You will face more token usage, added latency, more failure points, and handoff complexity.

Only accept those costs when you hit a real constraint that simpler architectures cannot solve.

To Wrap Up

To build reliable AI applications, you must stay as far left on the complexity spectrum as possible while still solving your problem.

Keep these key takeaways in mind:

Not every LLM application is an agent, and not every tool is an agent.

Always start with workflows because they are predictable, cheap, and testable.

Use one agent when the path cannot be predetermined, but keep the tool count manageable.

Move to multi-agent architectures only when you hit a real constraint like true parallelism or context overload.

Each step right on the spectrum increases cost, latency, and debugging complexity. The simplest system that reliably solves the problem is always the best system.

💡 If you want a step-by-step framework to help you decide what architecture to pick for your next project, Louis-François and the Towards AI team put together a free cheatsheet that walks you through the decision process from workflows to multi-agent systems.

What’s your opinion? Do you agree, disagree, or is there something I missed?

Enjoyed the article? The most sincere compliment is to share our work.

Go Deeper

Go from zero to production-grade AI agents with the Agentic AI Engineering self-paced course. Built in partnership with Towards AI.

Across 34 lessons (articles, videos, and a lot of code), you’ll design, build, evaluate, and deploy production-grade AI agents end to end. By the final lesson, you’ll have built a multi-agent system and a capstone project where you apply everything you’ve learned on your own.

Three portfolio projects and a certificate to showcase in interviews. Plus a Discord community where you have direct access to other industry experts and me.

Rated 4.9/5 ⭐️ by 300+ students saying “Every AI Engineer needs a course like this.”

Not ready to commit? We also prepared a free 6-day email course to reveal the 6 critical mistakes that silently destroy agentic systems. Get the free email course.

Images

If not otherwise stated, all images are created by the author.

awesome post. i fully agree about staying left. i’ve been guilty of over engineering systems too many times and have thoroughly learned this lesson. simplicity is king, make systems as simple as possible to achieve the goal. this applies with agents and llms.

This matches what I keep seeing from the PM side - teams pitch multi-agent because it sounds impressive in planning docs. Then debugging becomes a full-time job.

The decision framework here is solid. I would add one more filter before the workflow/agent split: can a human describe the success criteria in one sentence? If not, no amount of agent architecture will save you.