Stop Orchestrating AI Agents. Use Ralph Loops Instead.

How one simple loop beats multi-agent orchestration and context rot in production.

When building Brown, my writing assistant, I designed five specialized LLM nodes. One handled the introduction, another wrote sections, and others managed the conclusion, title, and editing. It became complicated, slow, and expensive.

I eventually collapsed the system into two agents: a writer and a reviewer operating in a loop. The simpler version performed better. The model retained the full context, and verification became a simple review step rather than a massive orchestration problem.

Most AI teams hit this exact wall. Developers spend more time babysitting AI than engineering, copying error logs and re-prompting models. The real bottleneck is the human.

Three failure modes explain why.

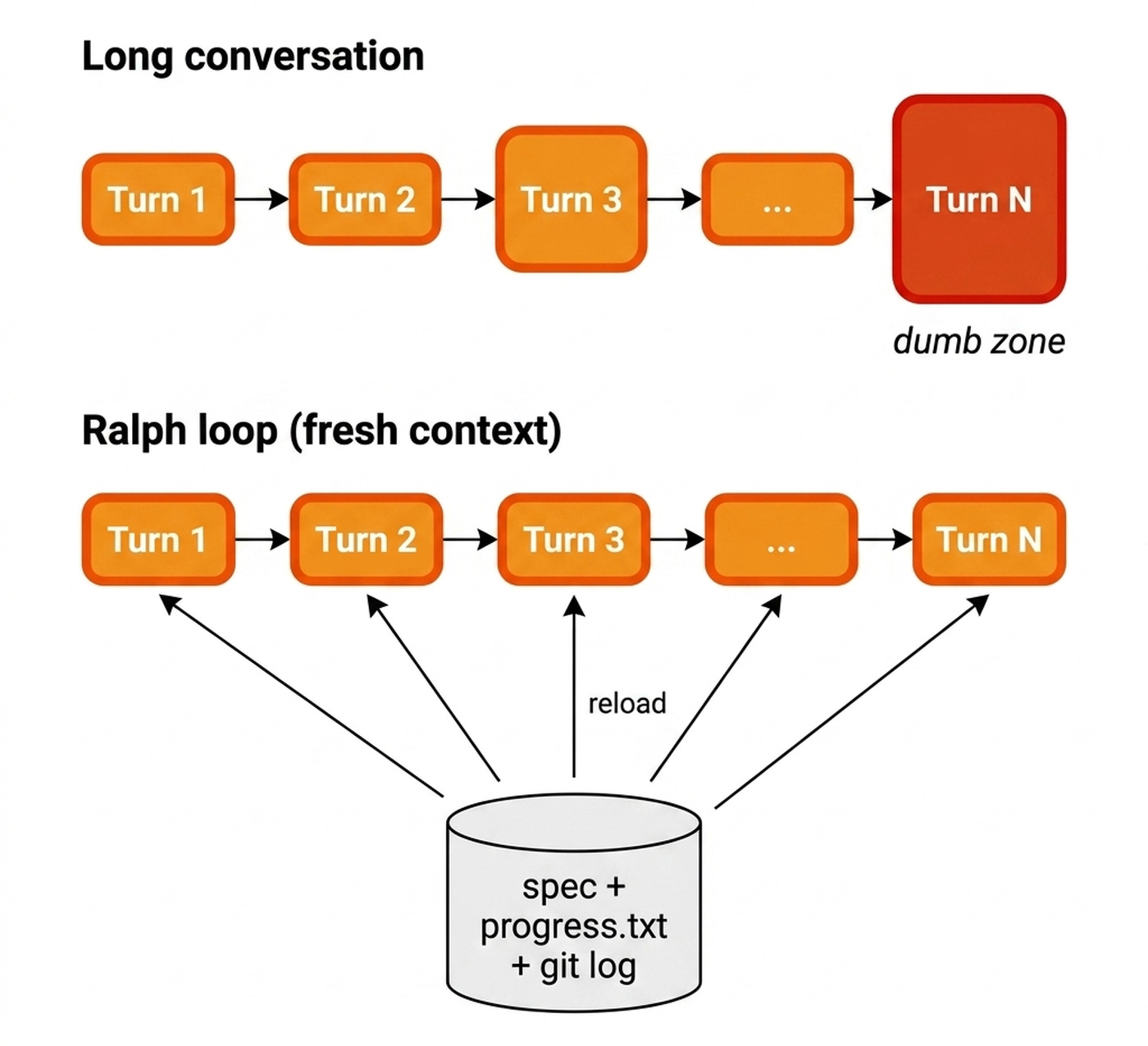

First, context rot. In long AI conversations, the context window becomes a junk drawer. Every failed attempt piles up until the sliding window drops the original specification. The model slides into a “dumb zone” where it hallucinates and forgets its goals. Traditional fixes like summarizing break down over dozens of reasoning rounds.

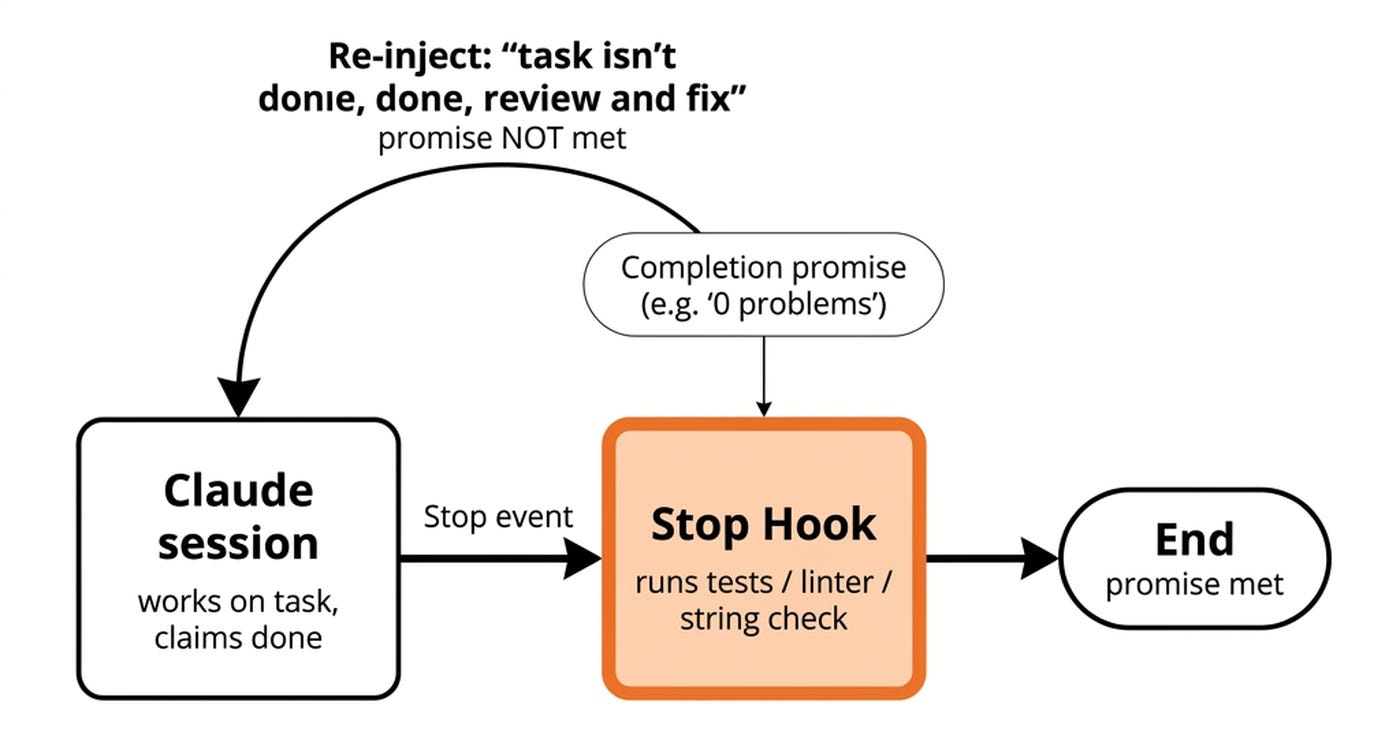

Second, premature exit. AI agents declare victory too early. Anthropic's research notes that agents tend to look around, see that progress has been made, and declare the job done [1]. Standard ReAct loops inherit the same flaw.

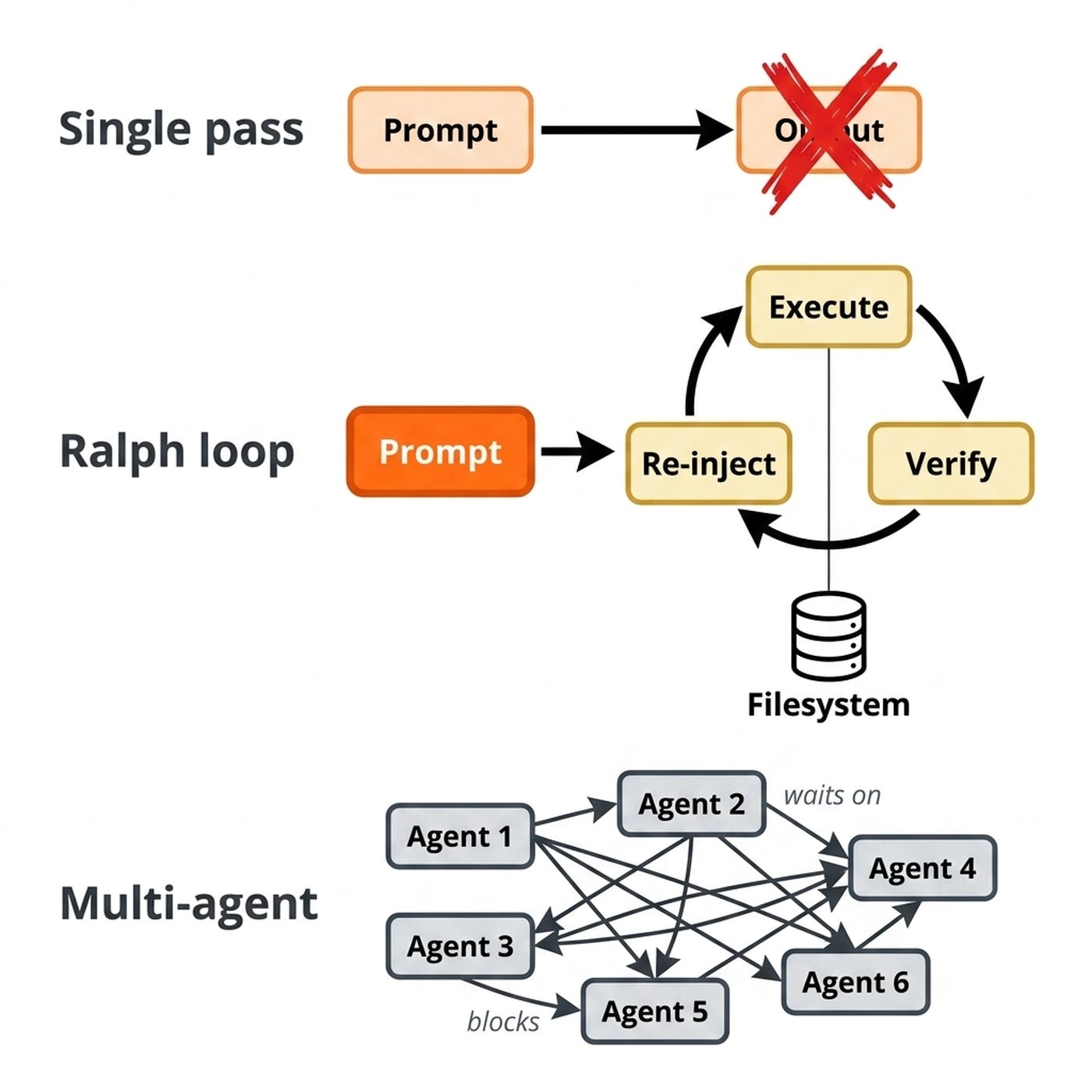

Third, single-pass fragility. One prompt, one context, one shot. When it fails, the failure is chaotic. Jumping to multi-agent orchestration introduces distributed systems nightmares.

Ralph loops break the cycle by making “try again with fresh eyes” the default. Named after Ralph Wiggum from The Simpsons, the pattern wipes the conversation, reloads the full specification fresh each iteration, and uses the filesystem and git as the memory layer.

They remove the AI’s ability to grade its own work, using objective signals such as passing tests or linters to call the job done. Boris Cherny, creator of Claude Code, states that giving Claude a way to verify its work increases quality two to three times [2].

One model. One loop. One verification signal. Failure becomes predictable: the loop catches errors and re-prompts automatically, creating a relatively deterministic feedback loop that can multiply the quality of the agent's output.

Now, let’s look at what Ralph loops are and when you can actually use them in practice.

Go Deeper Into Production AI Engineering (Product)

Ralph loops prove that most of the leverage lies in the harness, not the model. If you want to master how to design, verify, and ship those AI harnesses in production, check out my Agentic AI Engineering course, built with Towards AI.

34 lessons. Three end-to-end portfolio projects. A certificate. And a Discord community with direct access to industry experts and me.

Rated 5/5 by 300+ students. The first 6 lessons are free.

What Ralph Loops Are and How They Work

Geoffrey Huntley named the pattern after Ralph Wiggum from The Simpsons, noting the character tries the same thing over and over until it works. Huntley’s motto captures the philosophy: the technique is deterministically bad in an undeterministic world. The simplest implementation is a bash while-true loop that pipes a prompt file into the agent forever, acting as a continuous harness pattern [3], [4].
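Here is a minimal sketch of that one-liner, wrapped in a bash function so it can be tried safely. Assumptions: the spec lives in a file named PROMPT.md, and the agent is Claude Code in print mode (`claude -p`), which is assumed to read the prompt from stdin. The iteration cap and the `AGENT` override are hypothetical additions for testing; the original pattern simply runs forever.

```bash
#!/usr/bin/env bash
# Minimal Ralph loop sketch (hypothetical helper, not Huntley's exact script).
# Each iteration launches a brand-new agent process, so no conversation carries
# over: the spec is re-read from disk, and the repo plus git history are the memory.

ralph() {
  local prompt_file="${1:-PROMPT.md}"  # assumption: spec/prompt file name
  local max_iters="${2:-0}"            # 0 = run forever, like `while :; do ...; done`
  local agent="${AGENT:-claude -p}"    # assumption: agent CLI reads the prompt on stdin
  local i=0

  while :; do
    i=$((i + 1))
    # Word-splitting on $agent is intentional so "claude -p" becomes two arguments.
    # A failed run is not fatal: the loop just tries again with fresh eyes.
    $agent < "$prompt_file"
    if [ "$max_iters" -gt 0 ] && [ "$i" -ge "$max_iters" ]; then
      break
    fi
  done
}
```

`ralph PROMPT.md` runs until you press Ctrl-C; `ralph PROMPT.md 5` stops after five passes, which is handy for a first trial run.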

As Einstein reportedly said: “Insanity is doing the same thing over and over again and expecting different results.” Well... I am sure he didn’t predict the rise of Claude Code, because that’s exactly what Ralph loops are all about.

Models are stochastic: strong at reading large contexts but imperfect on the first pass. Re-running the same instruction effectively forces a self-review. The first iteration produces good but flawed output.

During the second pass, the model spots what it missed and refactors. The third iteration handles cleanup. Huntley delivered a minimum viable product quoted at $50,000 for just $297 in tokens using a single Ralph loop: roughly a 170x cost reduction over the human estimate [3].

You can run these loops in two modes. Shared context keeps the session alive for explicit self-review. Fresh context starts a new session each iteration, removing confirmation bias: the model sees only the repository and the skill file.
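The two modes differ only in whether an iteration resumes the previous conversation. A minimal sketch, assuming Claude Code's print mode (`-p`) and its `-c`/`--continue` flag, which resumes the most recent conversation in the current directory. The `ralph_step` name and the `AGENT_BIN` override are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch of one Ralph iteration in either mode (hypothetical helper).
#   fresh  -> new session every time: the model only sees the repo and the prompt file
#   shared -> resume the last conversation so the model can review its own prior turn

ralph_step() {
  local mode="$1" prompt_file="$2"
  local bin="${AGENT_BIN:-claude}"   # override for dry runs and tests

  case "$mode" in
    fresh)  "$bin" -p < "$prompt_file" ;;
    shared) "$bin" -c -p < "$prompt_file" ;;
    *)
      echo "unknown mode: $mode (expected fresh or shared)" >&2
      return 64
      ;;
  esac
}
```

Calling `ralph_step fresh PROMPT.md` inside a `while` loop gives the bias-free variant; swapping in `shared` trades that for continuity.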

Nowadays, it’s common to replace brittle n8n workflows with a single Claude Code skill. This becomes even more powerful when running in a Ralph loop, especially because at the end of each run, you can take the signal and tell the model to update the skill with anything it should have done differently.

The skill evolves and quality improves automatically [5].

This self-improving mechanism reduces manual prompt tuning when applied to specific, repetitive engineering tasks.
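One way to wire that feedback step, as a sketch: run the skill, run an objective check, and if the check fails, spend one extra agent call asking the model to amend the skill file. The skill path `.claude/skills/ticket-worker/SKILL.md`, the `npm test` check, and the prompt wording are all hypothetical; adapt them to your repo.

```bash
#!/usr/bin/env bash
# Self-improving loop sketch (hypothetical names throughout).
# After each run, an objective check decides success; on failure the model is asked
# to fold the lesson back into the skill file so the next iteration starts smarter.

SKILL_FILE="${SKILL_FILE:-.claude/skills/ticket-worker/SKILL.md}"  # hypothetical path
CHECK_CMD="${CHECK_CMD:-npm test}"                                 # hypothetical check
AGENT="${AGENT:-claude -p}"

run_and_learn() {
  local log
  log=$(mktemp)

  # 1. Do the work, guided by the current version of the skill.
  $AGENT "Follow the instructions in $SKILL_FILE and complete the next task."

  # 2. Objective signal: the check, not the model, decides whether the run succeeded.
  if $CHECK_CMD >"$log" 2>&1; then
    rm -f "$log"
    return 0
  fi

  # 3. Feed the failure back and ask the model to update the skill itself.
  $AGENT "The check '$CHECK_CMD' failed with this output:
$(tail -n 50 "$log")
Update $SKILL_FILE with anything you should have done differently. Edit only that file."
  rm -f "$log"
  return 1
}
```

Running `while :; do run_and_learn; done` turns this into a Ralph loop whose instructions drift toward whatever the check keeps rejecting. Reviewing the skill file's git diff now and then is a cheap way to catch bad lessons.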

Three Real-World Use Cases

In practice, Ralph loops don't have a single canonical implementation. They are more of an intuitive strategy you can get creative with, so there are multiple ways to implement them.

From my experience with Claude, you have three options for running Ralph loops, from highest abstraction to lowest:

- `/ralph-loop` plugin: the fastest path. Install it, run `/ralph-loop` in your session, and it manages the cycle for you.
- `/loop` command: Claude Code's built-in scheduler. `/loop every 1 minute /your-skill` fires the skill on a schedule [6].
- `while true` bash loop: the most primitive form. A one-liner that pipes a prompt file into the agent and restarts it forever.

Because Claude Code keeps state through the files it’s working on, it retains context from the failed attempt and reads its own git diffs. Each iteration learns from the last.

Implementing a ticket backlog with test-driven development

You can set up a ticket folder with numbered text files. Run a while-true loop that tells Claude to implement the next most important ticket using Test-Driven Development (TDD). The model writes tests first, writes the code, commits the changes, and moves on.

Claude reads all tickets, skips completed ones, picks the next priority, implements it, marks it done, and commits. No dependency graph is needed because the model decides the ordering on the fly. One dumb loop acts like a relentless single-threaded engineer working through the backlog.
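The plain while-true form never stops on its own, even after the last ticket ships. A sketch of one way to add an exit condition, assuming a hypothetical convention where the model moves each finished ticket into doc/tickets/done/; the helper names and the iteration cap are illustrative.

```bash
#!/usr/bin/env bash
# Ticket-backlog loop sketch. Assumption (hypothetical convention): open tickets are
# files directly in doc/tickets/, and the agent moves each finished one to doc/tickets/done/.

TICKETS_DIR="${TICKETS_DIR:-doc/tickets}"
AGENT="${AGENT:-claude -p}"

# Count open tickets: non-hidden regular files directly inside the tickets folder.
open_tickets() {
  find "$TICKETS_DIR" -maxdepth 1 -type f ! -name '.*' | wc -l | tr -d ' '
}

work_backlog() {
  local max_iters="${1:-50}"   # safety cap so a stuck ticket can't loop forever
  local i=0
  while [ "$(open_tickets)" -gt 0 ] && [ "$i" -lt "$max_iters" ]; do
    i=$((i + 1))
    $AGENT "Implement the next most important ticket in $TICKETS_DIR using TDD. \
When its tests pass, move the ticket file to $TICKETS_DIR/done/ and commit."
  done
  [ "$(open_tickets)" -eq 0 ]   # success only if the backlog is empty
}
```

The filesystem doubles as the progress tracker here: the loop never asks the model whether it is finished, it just counts files.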

For example, set up a doc/tickets folder with numbered tickets (001, 002, 003...). Each describes a feature or fix. Then run:

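```bash
while true; do
  # -p runs Claude Code non-interactively, so each pass exits and the loop starts a fresh one.
  # Assumes file edits, test runs, and git commits are pre-approved in your permission
  # settings; otherwise a headless run cannot use those tools.
  claude -p "implement the next most important ticket using TDD principles from doc/tickets. commit when done"
done
```

Or use Claude Code's built-in loop:

```
/loop every 1 minute
build the next ticket from doc/tickets using TDD, run tests, commit when done
```

Adding test coverage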

You can set a concrete goal to raise coverage from 16 percent to 95 percent. The loop reads coverage metrics, writes tests for uncovered functions, runs the suite, identifies gaps, and iterates.

The coverage report provides the objective backpressure. The loop does not stop until the numbers validate success. Each iteration chips away at untested code paths until the threshold is met.
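For the coverage report to act as real backpressure, the harness has to read the number itself, because a model running inside `while true` cannot end the outer loop. A sketch under stated assumptions: `COVERAGE_CMD` stands for a hypothetical script that prints only the total coverage percentage, the comparison uses awk so decimal values like 87.5 work, and "exceeds" is treated as strictly greater than, matching the prompt's wording.

```bash
#!/usr/bin/env bash
# Coverage-gated loop sketch. The harness, not the model, decides when to stop.
# Assumption: COVERAGE_CMD prints the total line-coverage percentage, e.g. "87.5" or "87.5%".

COVERAGE_CMD="${COVERAGE_CMD:-./scripts/coverage_total.sh}"  # hypothetical script
TARGET="${TARGET:-95}"
AGENT="${AGENT:-claude -p}"

# Succeeds when the reported percentage strictly exceeds the target (decimals allowed).
meets_target() {
  awk -v got="${1%\%}" -v want="$2" 'BEGIN { exit !(got + 0 > want + 0) }'
}

coverage_loop() {
  local max_iters="${1:-30}" i=0 pct
  while :; do
    pct=$($COVERAGE_CMD) || pct=0
    if meets_target "$pct" "$TARGET"; then
      echo "coverage ${pct%\%}% exceeds ${TARGET}% after $i agent runs"
      return 0
    fi
    if [ "$i" -ge "$max_iters" ]; then
      echo "gave up at ${pct%\%}% after $i agent runs" >&2
      return 1
    fi
    i=$((i + 1))
    $AGENT "Coverage is ${pct%\%}%. Analyze coverage gaps, write tests for uncovered \
functions, run the test suite, and fix failures. Commit when the suite is green."
  done
}
```

Checking coverage before the first agent call means the loop costs nothing when the target is already met.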

The implementation is as easy as:

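```bash
while true; do
  # The model cannot end this outer loop, so "stop when coverage exceeds 95%" only tells each
  # run to do nothing once the target is met; press Ctrl-C (or add an explicit check) to exit.
  claude -p "analyze coverage gaps, write tests for uncovered functions, run the test suite, fix failures. stop when coverage exceeds 95%"
done
```

Framework and dependency migrations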

Migrations require crisp completion criteria. Upgrading React v16 to v19, Next.js 14 to 15, or migrating Jest to Vitest demands a clean build and passing tests. The agent swaps syntax, updates dependencies, and runs build commands.

It uses compiler errors and failing tests as feedback. Each cycle fixes a batch of errors until the toolchain confirms the code is clean. Deterministic verification signals make framework migrations the perfect Ralph loop candidate.
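Because the toolchain already defines "done" for a migration, the loop can be an `until` that exits the moment the build and tests pass. A sketch; `npm run build && npm test` is an assumed verification command for a JavaScript project, and the helper name, cap, and prompt wording are illustrative.

```bash
#!/usr/bin/env bash
# Migration loop sketch: keep going until the toolchain says the code is clean.
# Assumptions: VERIFY_CMD is your build + test command; AGENT runs one headless agent pass.

VERIFY_CMD="${VERIFY_CMD:-npm run build && npm test}"
AGENT="${AGENT:-claude -p}"

migrate() {
  local goal="$1" max_iters="${2:-20}" i=0 log
  log=$(mktemp)
  # bash -c so the "&&" inside VERIFY_CMD is interpreted as a shell operator.
  until bash -c "$VERIFY_CMD" >"$log" 2>&1; do
    if [ "$i" -ge "$max_iters" ]; then
      echo "still failing after $i iterations; see $log" >&2
      return 1
    fi
    i=$((i + 1))
    # Feed back only the tail of the errors; each fresh session re-reads the repo itself.
    $AGENT "Goal: $goal. The verification command '$VERIFY_CMD' fails with:
$(tail -n 40 "$log")
Fix this batch of errors, then commit."
  done
  rm -f "$log"
  echo "verified clean after $i iterations"
}
```

For example, `migrate "upgrade React 16 to 19"` keeps cycling through batches of compiler and test failures until the verification command exits 0 or the cap is hit.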

These are three concrete starting points. Before you wire the first one up, there is one honest limit you should know.

What’s Next

Ralph loops are the starting point. Once comfortable, add self-improving skills that update their instructions after each run, wire stop hooks for objective quality gates to avoid infinite loops, and connect to external systems like Linear or GitHub Issues so the loop reacts to new work automatically.
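A stop hook can serve as that quality gate. The sketch below assumes the Stop-hook contract described in Claude Code's hooks documentation: the hook receives JSON on stdin, including a `stop_hook_active` flag that is true when Claude is already continuing because of a stop hook, and exiting with code 2 blocks the stop and shows stderr to Claude. `VERIFY_CMD`, the script path, and the function name are hypothetical; confirm the details against the current docs before relying on it.

```bash
#!/usr/bin/env bash
# Stop-hook quality gate sketch (hypothetical script, e.g. .claude/hooks/stop_gate.sh).
# Assumed contract: hook input JSON arrives on stdin; exit code 2 blocks the stop and
# sends stderr back to Claude; stop_hook_active=true means we already blocked once.

VERIFY_CMD="${VERIFY_CMD:-npm test}"   # hypothetical objective check

stop_gate() {
  local input out
  input=$(cat)

  # Conservative guard against infinite loops: block at most once per turn.
  # Remove this check (and add your own retry cap) if you want repeated retries.
  if printf '%s' "$input" | grep -Eq '"stop_hook_active"[[:space:]]*:[[:space:]]*true'; then
    return 0
  fi

  if out=$(bash -c "$VERIFY_CMD" 2>&1); then
    return 0
  fi

  # Exit code 2: block stopping and show the failure to Claude as feedback.
  printf 'Verification failed (%s). Fix these before stopping:\n%s\n' \
    "$VERIFY_CMD" "$(printf '%s\n' "$out" | tail -n 30)" >&2
  return 2
}
```

The file only defines `stop_gate`, so the command registered for the Stop event in `.claude/settings.json` would need to source it and call it, for example `bash -c 'source .claude/hooks/stop_gate.sh && stop_gate'`.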

The pattern scales further than it looks. OpenAI’s Codex team shipped one million lines of code across 1,500 pull requests with zero human-written code using what they call a “Ralph Wiggum Loop” [7].

These loops are safe when repo-contained and the toolchain acts as the judge. They get dangerous with irreversible side effects outside the repo. Alexey Grigorev learned this when a Claude Code agent ran terraform destroy on DataTalks.Club’s production infrastructure, wiping the database, VPC, and all automated snapshots — two and a half years of data gone in one iteration. If your loop can destroy shared state, review every plan manually [8].
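One cheap safeguard, in addition to reviewing plans by hand, is a `PreToolUse` hook that refuses destructive shell commands before they run. A sketch, assuming the hook receives the Bash tool's command at `.tool_input.command` in its stdin JSON, that exit code 2 blocks the tool call, and that `jq` is installed. The pattern list is illustrative, not exhaustive, and string matching is easy to evade, so treat this as a tripwire rather than a security boundary.

```bash
#!/usr/bin/env bash
# PreToolUse guard sketch (hypothetical script, e.g. .claude/hooks/guard.sh).
# Assumptions: stdin JSON has .tool_input.command for Bash calls; exit 2 blocks the call.

# Returns 0 (true) if the command matches a known-destructive pattern. Illustrative list only.
is_destructive() {
  case "$1" in
    *"terraform destroy"*|*"terraform apply"*) return 0 ;;
    *"DROP DATABASE"*|*"git push --force"*)    return 0 ;;
    *) return 1 ;;
  esac
}

guard_bash_tool() {
  local cmd
  cmd=$(jq -r '.tool_input.command // empty')   # requires jq
  if is_destructive "$cmd"; then
    echo "Blocked: '$cmd' can change shared state outside the repo. Ask a human to review and run it." >&2
    return 2
  fi
  return 0
}
```

To use it, register a command such as `bash -c 'source .claude/hooks/guard.sh && guard_bash_tool'` as a PreToolUse hook matching the Bash tool. Read-only commands like `terraform plan` still pass, so the loop can prepare a plan for a human to approve.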

What is the first piece of work in your repo you would trust a Ralph loop with? A TDD backlog, a coverage ramp, a framework migration, or something else?

Click the button below and tell me. I read every response.

Enjoyed the article? The most sincere compliment is to restack this for your readers.

Whenever you’re ready, here is how I can help you

If you want to go from zero to shipping production-grade AI agents, check out my Agentic AI Engineering course, built with Towards AI.

Not ready to commit? Start with our free Agentic AI Engineering Guide, a 6-day email course on the mistakes that silently break AI agents in production.

References

[1] Anthropic. (n.d.). Effective Harnesses for Long-Running Agents. Anthropic. https://www.anthropic.com/engineering/effective-harnesses-for-long-running-agents

[2] Cherny, B. (n.d.). I'm Boris and I Created Claude Code. X. https://x.com/bcherny/status/2007179832300581177

[3] Huntley, G. (n.d.). Ralph Wiggum as a "software engineer". Geoffrey Huntley. https://ghuntley.com/ralph/

[4] LangChain. (n.d.). The Anatomy of an Agent Harness. LangChain Blog. https://blog.langchain.com/the-anatomy-of-an-agent-harness/

[5] Parsons, C. (n.d.). Ralph Loops: Build Dumb AI Loops That Ship. AI Engineer. https://read.readwise.io/read/01kp5bgy8b07y256ythvkz2tt7

[6] Anthropic. (n.d.). Claude Code: Best Practices for Agentic Coding. Anthropic. https://www.anthropic.com/engineering/claude-code-best-practices

[7] Lopopolo, R. (n.d.). Harness engineering: leveraging Codex in an agent-first world. OpenAI. https://openai.com/index/harness-engineering-codex/

[8] Grigorev, A. (n.d.). How I Dropped Our Production Database and Now Pay 10% More for AWS. Alexey Grigorev. https://alexeygrigorev.com/posts/dropped-production-database/

Images

If not otherwise stated, all images are created by the author.