Our LLM Judge Passed Everything. It Was Wrong.

Align your evaluator with human judgment, or don't trust it at all.

Welcome to the AI Evals & Observability series: A 7-part journey from shipping AI apps to systematically improving them. Made by busy people. For busy people.

🧐 Everyone says you need AI evals. Few explain how to actually build them and answer questions such as…

How do we avoid creating evals that waste our time and resources? How do we build datasets and design evaluators that matter? How do we adapt them for RAG? ...and most importantly, how do we stop “vibe checking” and leverage evals to actually track and optimize our app?

This 7-article series breaks it all down from first principles:

How to Evaluate the Evaluator ← You are here

By the end, you’ll know how to integrate AI evals that actually track and improve the performance of your AI product. No vibe checking required!

Let’s get started.

How to Evaluate the Evaluator

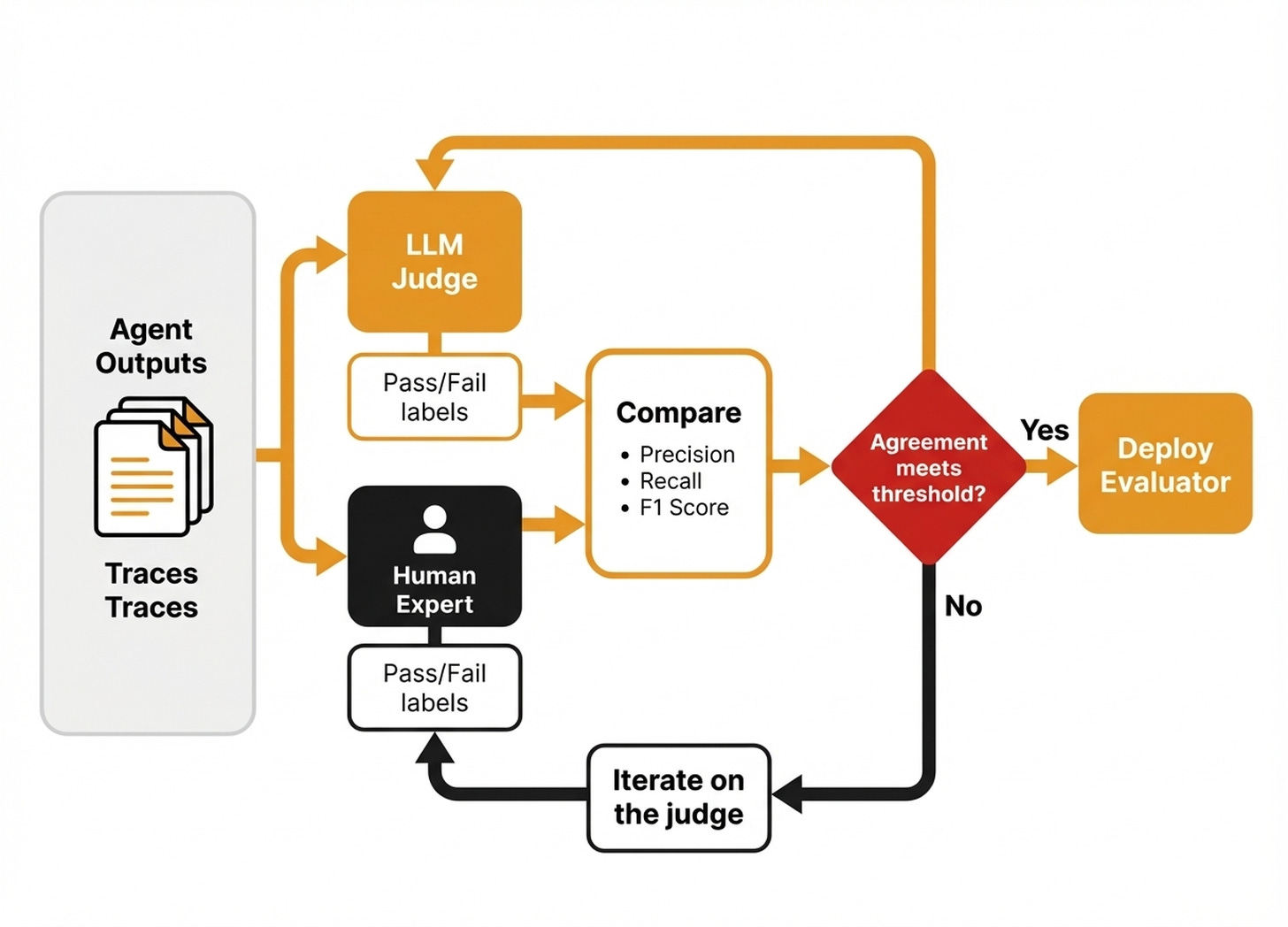

Your evaluators are running. They produce Pass or Fail verdicts on your agent’s outputs. But one open question remains: how do you know if those verdicts are correct?

While building Brown, a writer agent I developed with the Towards AI team for our Agentic AI Engineering course, I set up an LLM judge to verify generated articles. I wanted to check the expected structure, idea flow, and content against a golden dataset. I ran it on a batch of traces, and the scores seemed reasonable. Then I manually compared the traces against the judge’s verdicts, only to realize it was fixating on the wrong things.

It scored 0 when an article used bullet points instead of H3 headers, which was perfectly acceptable for that section. It scored 0 when the agent used a different transition phrase than the few-shot examples, penalizing creativity when we wanted flexibility. Furthermore, it scored 1 when paragraphs did not flow smoothly into each other, completely overlooking a real quality issue we cared about.

We had to iterate on the judge until it reflected what we actually valued. Anthropic reports a similar pattern, seeing eval scores jump from 42% to 95% after fixing grading bugs and ambiguous task specifications [3]. The agent was fine all along, but the evaluator was broken. That experience crystallized something for me: eval metrics you cannot trust are worse than no metrics at all.

Unvalidated evals create false confidence. You see green dashboards, assume quality is fine, and stop looking. You push broken outputs because the numbers said they were good, and you hear about problems from frustrated users instead of your test suite. Worst of all, you cannot tell which evaluations are wrong, as the 10-20% of incorrect signals hide silently and contaminate every decision built on those scores.

Your evaluator is another AI model that makes binary predictions, so it needs a test set, metrics, and mapped failure modes like any other model.

LLM judges are also inherently non-deterministic, and on top of that they hallucinate, carry biases, and drift. Alignment with human evaluators varies widely by task: some teams achieve high agreement after careful iteration, while others struggle to break 70% on subjective criteria. The gap between your judge and reality could mean hundreds of bad signals across a thousand evaluations, and you will not know about them without validation [2].

Here is what you will learn to solve this problem:

Partitioning your labeled data to prevent data leakage.

Quantifying agreement using standard classification metrics.

Systematically closing the gap between your judge and domain experts.

Dealing with the randomness of LLMs.

To start this process, we first need to structure our dataset correctly.

But before digging into the article, a quick word from our sponsor, Opik. ↓

Opik: Automated Agent Optimization Using Your Data (Sponsored)

This AI Evals & Observability series is brought to you by Opik, the LLMOps open-source platform used by Uber, Netflix, Etsy, and more.

We use Opik daily across our courses and AI products. Not just for observability, but now to automatically optimize our agents’ prompts using the same datasets and metrics we already have in the platform.

You are learning how to build diverse synthetic datasets to evaluate your AI app. But once you have those datasets and metrics, why stop at measuring quality? Opik’s agent optimizer closes the loop. It uses your eval dataset to automatically improve your prompts. Here is why we love it:

Same datasets, zero extra setup — Opik’s optimizer reuses the exact datasets, metrics, and tracing you already have. Quick start guide.

Six optimization algorithms — Choose from strategies like HRPO (our favorite), which performs root-cause analysis on failures and proposes targeted fixes, or evolutionary optimization to explore diverse prompt structures. See all algorithms.

No-code Optimization Studio — For quick iterations, run optimization directly from the Optimization Studio UI. Start from your prompt, pick your dataset, choose an algorithm, and watch Opik test prompt variations against your metrics in real time.

Opik is fully open source and integrates with OpenAI, Anthropic, Gemini, and 100+ providers. Start optimizing your agents for free:

↓ Now, let’s move back to the article.

Structuring Your Data for Validation

You already have your ground truth. As explained in Article 2 and Article 3 of the series, your domain expert labeled each trace as Pass or Fail with a critique. Those labels are the reference standard your automated judge must match. If the task is highly subjective, consider having multiple people label the same examples to discover the agreement ceiling, but for most teams, a single expert is sufficient.

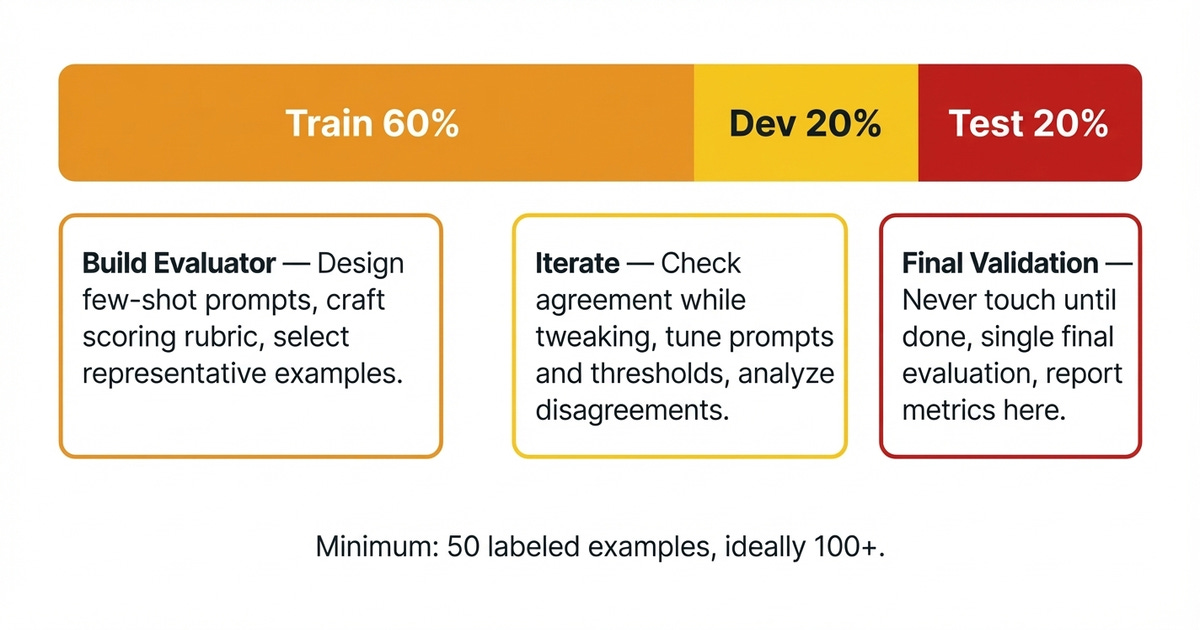

Now you need to partition that labeled data correctly. Why? Because you cannot build and validate on the same examples, as that is like grading your own exam. You must calculate the error on unseen data only to make sure you are not getting biased results, so split your dataset into three sets: train, dev and test [2].

The train set takes 60% of the data, representing the examples your evaluator learns from. They go into the few-shot prompt, inform the rubric, and set the standard for what Pass and Fail look like. The dev set takes 20% of the data, acting as your iteration sandbox. Run the judge here, check where it disagrees with the expert, adjust the prompt, and repeat to refine the system. Finally, the test set takes the remaining 20% and must be kept locked until you are done iterating. You use it only at the end when the LLM judge is aligned with the expert on the dev set. This gives you an unbiased final score on data that the evaluator has never seen.

The 60/20/20 split is a good starting point. As your data grows, you will not want to overload the few-shot examples, since every example you add enlarges the prompt, so you can start moving more data into the dev and test splits.

In practice, 100 labeled examples mean 60 powering the prompt, 20 for tuning, and 20 for the final honest check. Aim for at least 100 labeled examples to get stable metrics. Below 50, your numbers become too noisy to act on. Watch out for class imbalance. If 90% of your traces are Pass and only 10% are Fail, you need a way to balance the classes, either by synthetically increasing your negative class or removing samples from your positive class, until a balance is achieved.
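As a minimal sketch of the 60/20/20 partition, the snippet below splits a list of labeled traces per class, so every split keeps roughly the same Pass/Fail mix. The `stratified_split` helper, the dictionary fields, and the 70/30 mock dataset are assumptions made for illustration. Splitting per label is also my own addition, used here so the few Fail examples do not all land in one split.

```python
import random
from collections import defaultdict


def stratified_split(examples, ratios=(0.6, 0.2, 0.2), seed=42):
    """Split labeled traces into train/dev/test with a similar label mix in each."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for ex in examples:
        by_label[ex["label"]].append(ex)

    train, dev, test = [], [], []
    for group in by_label.values():
        group = group[:]  # copy so the caller's list is not shuffled in place
        rng.shuffle(group)
        n_train = round(len(group) * ratios[0])
        n_dev = round(len(group) * ratios[1])
        train += group[:n_train]
        dev += group[n_train:n_train + n_dev]
        test += group[n_train + n_dev:]  # the remainder becomes the locked test set
    return train, dev, test


# Hypothetical dataset: 100 expert-labeled traces, 70 Pass and 30 Fail.
traces = [{"id": i, "label": "Pass" if i < 70 else "Fail"} for i in range(100)]
train, dev, test = stratified_split(traces)
print(len(train), len(dev), len(test))  # 60 20 20
```

Fixing the seed keeps the split reproducible, which matters because the test set must stay the same locked set across all iterations.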

With data properly structured, let us quantify how well your judge actually agrees with the expert.

Measuring Alignment With Human Judgment

Your judge outputs Pass or Fail for each trace, which means you are building a binary classifier. You are using LLMs instead of other models, but ultimately, it’s still just a classifier.

Thus, you need to quantify the performance of the LLM Judge against the golden dataset we just split in the previous section. Standard classification metrics give you this visibility.

The confusion matrix shows four possible outcomes. True Positive (TP) means both judge and expert say Pass, agreeing the output is good. True Negative (TN) means both say Fail, agreeing the output is bad. False Positive (FP) means the judge says Pass, but the expert says Fail, letting a bad output through. False Negative (FN) means the judge says Fail, but the expert says Pass, meaning the judge was overly harsh.

Combining TP, TN, FP, and FN yields three fundamental metrics:

Accuracy is the overall agreement rate, calculated as (TP + TN) / total. If the judge matches the expert on 170 out of 200 traces, that is 85% accuracy. This is useful when Pass and Fail are roughly balanced, but it is highly misleading when they are not.

Precision measures how trustworthy the Pass verdicts are, representing the fraction of judge-approved traces that the expert also labeled Pass. You calculate it as TP / (TP + FP). If the judge approves 50 articles and the expert disagrees on 8, precision is 42 / 50 = 84%, meaning when the judge says the output is good, you can generally believe it.

Recall measures how many actual Passes the judge finds out of all the traces the expert labeled Pass. You calculate it as TP / (TP + FN). If 60 articles are genuinely good but the judge only catches 48, recall is 48 / 60 = 80%, meaning the judge finds most quality output but still misses some.

Finally, the F1 score aggregates both into a single balanced number. It is the harmonic mean of precision and recall, calculated as 2 × (Precision × Recall) / (Precision + Recall). Use it when false positives and false negatives matter equally. The right F1 target depends on the metric. With Brown, we accepted around 60% for subjective metrics like style, but demanded over 90% for objective ones like article structure. As a general rule, aim for an F1 above 70%.
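To make the formulas concrete, here is a small sketch that counts the four confusion-matrix outcomes from paired expert and judge verdicts, then derives accuracy, precision, recall, and F1 exactly as defined above. The function names and the six mock verdicts are hypothetical.

```python
def confusion_counts(expert, judge, positive="Pass"):
    """Count TP, TN, FP, FN, treating the expert labels as ground truth."""
    tp = tn = fp = fn = 0
    for e, j in zip(expert, judge):
        if j == positive and e == positive:
            tp += 1
        elif j != positive and e != positive:
            tn += 1
        elif j == positive:
            fp += 1  # judge approved an output the expert rejected
        else:
            fn += 1  # judge rejected an output the expert approved
    return tp, tn, fp, fn


def safe_div(numerator, denominator):
    """Return 0.0 instead of raising when a class has no examples."""
    return numerator / denominator if denominator else 0.0


def alignment_metrics(tp, tn, fp, fn):
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
    }


# Mock verdicts for six traces.
expert = ["Pass", "Pass", "Pass", "Fail", "Fail", "Pass"]
judge = ["Pass", "Fail", "Pass", "Pass", "Fail", "Pass"]
metrics = alignment_metrics(*confusion_counts(expert, judge))
print(metrics)
```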

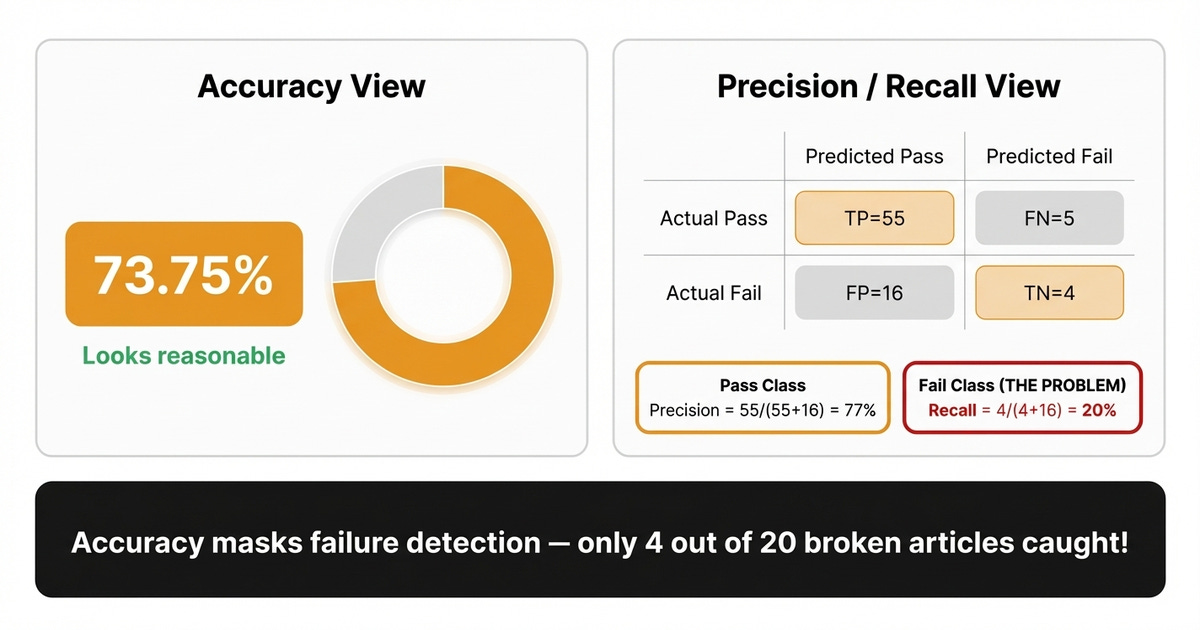

These metrics seem simple enough. But there is a common trap most teams fall into when their datasets are not balanced.

When High Scores Hide Real Failures

We can best understand this phenomenon by looking at a few examples.

Let’s assume Brown generates 80 articles: 70 are correct, and 10 are broken. Your judge labels every single one as Pass. Accuracy sits at 70 / 80 = 87.5%, which looks reasonable, but the judge never caught a single failure, making it completely useless.

Let us look at another example in more depth. Out of 80 generated articles, 60 are genuinely well-structured, while 20 have real problems like missing sections or disconnected paragraphs. The judge correctly approves 55 of the good ones and wrongly rejects 5. Of the 20 broken articles, it catches only 4 and lets 16 slip through. That gives us TP=55, FN=5, FP=16, TN=4.

Overall accuracy reads (55 + 4) / 80 = 73.75%, which looks reasonable. But Fail-class recall is just TN / (FP + TN) = 4 / (16 + 4) = 20%, meaning the judge misses 80% of structural failures. The lesson here is to always check precision and recall on the minority class. If those numbers are low, enrich your few-shot prompts with more failure examples, focusing particularly on the subtle cases where individual paragraphs look fine but do not connect fluidly [2].
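The two examples above can be reproduced with a few lines of arithmetic. This sketch reports precision and recall for both classes by flipping which class counts as positive. The `per_class_report` helper is hypothetical, and the counts are taken directly from the examples in this section.

```python
def ratio(numerator, denominator):
    """Return 0.0 when the denominator is empty, e.g. the judge never says Fail."""
    return numerator / denominator if denominator else 0.0


def per_class_report(tp, tn, fp, fn):
    """Score the Pass class as positive, then flip roles to score the Fail class."""
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "pass_precision": ratio(tp, tp + fp),
        "pass_recall": ratio(tp, tp + fn),
        "fail_precision": ratio(tn, tn + fn),
        "fail_recall": ratio(tn, tn + fp),
    }


# Example 1: the judge approves all 80 articles (70 good, 10 broken).
all_pass = per_class_report(tp=70, tn=0, fp=10, fn=0)
# Example 2: TP=55, FN=5, FP=16, TN=4.
skewed = per_class_report(tp=55, tn=4, fp=16, fn=5)

for name, report in (("all_pass", all_pass), ("skewed", skewed)):
    print(name, {key: round(value, 4) for key, value in report.items()})
```

In both cases, accuracy and Pass-class recall look healthy while Fail-class recall collapses (0% and 20%), which is exactly the signal overall accuracy hides.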

Now that you know what to measure and what to watch out for, let us walk through the process of systematically improving your judge.

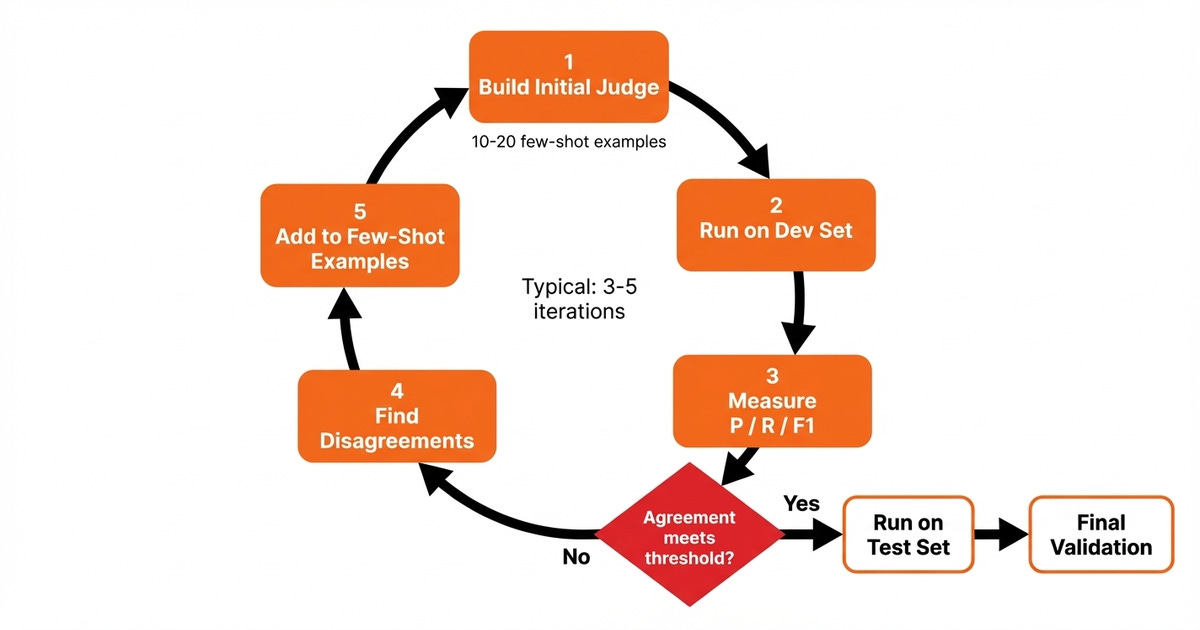

Closing the Gap Between Judge and Expert

This is the core workflow for making your judge reliable. Start with 10-20 few-shot examples from the train set to build your initial judge, and run it against the dev set while leaving the test set untouched. Compute precision, recall, and F1, then identify every disagreement where the judge and expert diverge. Expand your few-shot examples by incorporating those disagreements into the prompt when they reveal real patterns, re-run, and re-measure until the dev set alignment hits your target threshold.
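A minimal sketch of the disagreement step might look like the following. It assumes each dev-set trace is a dictionary with `id`, `label`, and `critique` fields, and that judge verdicts are stored in a dictionary keyed by trace id. Both structures are hypothetical and only illustrate how to surface the cases worth reading.

```python
def find_disagreements(dev_set, judge_verdicts):
    """Group dev-set traces where the judge and the expert diverge by error type."""
    buckets = {"false_pass": [], "false_fail": []}
    for trace in dev_set:
        verdict = judge_verdicts[trace["id"]]
        if verdict == trace["label"]:
            continue  # judge and expert agree, nothing to review
        key = "false_pass" if verdict == "Pass" else "false_fail"
        buckets[key].append({**trace, "judge_verdict": verdict})
    return buckets


# Mock dev set with expert labels and critiques.
dev_set = [
    {"id": "t1", "label": "Pass", "critique": "Bullets are fine in this section."},
    {"id": "t2", "label": "Fail", "critique": "Paragraphs do not connect."},
    {"id": "t3", "label": "Pass", "critique": "Clear structure and flow."},
]
judge_verdicts = {"t1": "Fail", "t2": "Pass", "t3": "Pass"}

disagreements = find_disagreements(dev_set, judge_verdicts)
for error_type, traces in disagreements.items():
    for trace in traces:
        print(error_type, trace["id"], "-", trace["critique"])
```

Separating false passes from false fails helps you decide which kind of few-shot example the prompt is missing.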

Remember that your few-shot examples translate to input tokens, which translate to extra costs. Thus, ideally, you want to keep your few-shot examples as minimal, yet diverse, as possible, while maximizing performance on your dev and test splits.

Lock the test set for the final check. Only run the judge on the test set after you stop iterating on the dev set, giving you an uncontaminated measurement of real performance.

Expect at least 3 rounds of iteration. If you are still far below target after 10 iterations, the task may require human judgment that no prompt can replicate. Start by hand, as manual prompt refinement teaches you where your judge’s reasoning diverges from the expert’s. Carefully studying each disagreement is the most informative signal you have, and once your labeled dataset is large and high-quality enough, you can explore automated prompt optimization tools.

Read the LLM Judge critiques instead of just looking at metrics, as critiques tell you whether the judge was wrong or the expert missed something. As highlighted by Anthropic, you shouldn’t take eval scores at face value until someone digs into the details and reads the critiques of the judge [3].

Once your judge passes validation, put it to work for regression testing, optimization, and production monitoring as explained in Article 1.

What if the agreement stays low? If after 10 rounds your agreement is still low, here is what to look out for. Your few-shot examples might be too narrow. As you keep sampling more production traces using your observability platform (e.g., Opik), revisit error analysis, as explained in Article 2, to find the specific patterns where the judge fails and add those to the few-shot examples. With Brown, our initial examples were too uniform, and adding subtle structural failures immediately improved alignment.

The rubric might lack specificity, as asking if the article is well-written invites interpretation, while asking if it contains well-defined paragraphs, transitions, and metaphors leaves less room for ambiguity. Sharpen the criteria.

Also, in case the task itself is too subjective, consider accepting a lower F1 score. For example, with Brown, style adherence was inherently subjective, so we accepted a lower F1 there while holding structure to >90%. The idea is to adapt your acceptance threshold based on the nature of each business metric you are tracking.

Even with strong agreement, there is one more challenge. Both your judge and your agent introduce randomness into every run. Let’s see how we can fix that.

Dealing With Non-Determinism

Randomness comes from two directions: the judge produces different scores on the same input, and the agent itself takes different paths on each run. You need to address both to build a stable evaluation pipeline.

The easiest and most powerful way to win is to scale the dataset, as larger datasets smooth out noise. Aim for enough examples in each class that a few misclassifications do not swing your metrics wildly. A good starting point is a minimum of 50 samples per class.

Another easy win, though not necessarily a cheap one, is to pick the strongest available model, such as the latest versions of Claude Opus or Gemini Pro, because the judge should be at least as capable as the system it evaluates [2]. Require reasoning before the verdict by structuring the prompt with Chain of Thought (CoT), so the judge walks through each criterion before delivering Pass or Fail. This step-by-step analysis produces more consistent scores and better alignment with human judgment [1].

Let the judge abstain by giving it an “Unknown” option when it lacks enough information to decide, because forcing a binary Pass/Fail on ambiguous cases generates false positives you cannot distinguish from real ones [3].
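The two prompt-level ideas above, reasoning before the verdict and an explicit “Unknown” option, can be sketched as a prompt template plus a small parser. The template wording, the `VERDICT:` line format, and the choice to treat unparseable responses as Unknown are all assumptions for illustration. No specific LLM client is used, so the judge response is mocked as a string.

```python
import re

# Hypothetical judge prompt: reasoning first, then a single machine-readable verdict line.
JUDGE_PROMPT = """You are evaluating an article against the criteria below.

Criteria:
{criteria}

Article:
{article}

First, reason through each criterion step by step.
Then end with exactly one line of the form:
VERDICT: Pass | Fail | Unknown
Use Unknown only when you lack enough information to decide."""

VERDICT_RE = re.compile(
    r"^VERDICT:\s*(Pass|Fail|Unknown)\s*$", re.IGNORECASE | re.MULTILINE
)


def parse_verdict(response: str) -> str:
    """Extract the last verdict line; fall back to Unknown if none is found."""
    matches = VERDICT_RE.findall(response)
    if not matches:
        return "Unknown"  # assumption: an unparseable answer counts as an abstention
    return matches[-1].capitalize()


prompt = JUDGE_PROMPT.format(
    criteria="- Paragraphs flow into each other", article="..."
)
mock_response = "The second paragraph does not connect to the first.\nVERDICT: Fail"
print(parse_verdict(mock_response))  # Fail
```

Tracking how often the judge returns Unknown is useful on its own, since a high abstention rate usually points to a rubric that is missing information.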

To further stabilize the judge, you can compute a significance threshold by running the evaluation 3-5 times and computing the variance between the runs. With Brown, this was essential because writing is subjective, and running the evaluator 5 times told us the real error threshold. A 3% metric shift across runs was noise, but 10% meant something actually changed. Without this, you are chasing random fluctuations.
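One simple way to turn repeated runs into a threshold is to compare a new score against the spread of the baseline runs. The sketch below uses the sample standard deviation and flags a change only when it exceeds `k` standard deviations. The choice of `k = 2` and the five mock F1 scores are assumptions, not values from Brown.

```python
from statistics import mean, stdev


def noise_band(run_scores, k=2.0):
    """Return the mean score and a threshold of k sample standard deviations."""
    return mean(run_scores), k * stdev(run_scores)


def is_real_change(baseline_runs, new_score, k=2.0):
    """True when the new score falls outside the run-to-run noise band."""
    center, threshold = noise_band(baseline_runs, k)
    return abs(new_score - center) > threshold


# Mock F1 scores from running the same judge five times on the same dev set.
baseline = [0.81, 0.83, 0.80, 0.84, 0.82]
center, threshold = noise_band(baseline)
print(f"mean={center:.3f} threshold=+/-{threshold:.3f}")

print(is_real_change(baseline, 0.84))  # False: within run-to-run noise
print(is_real_change(baseline, 0.92))  # True: larger than the noise band
```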

On the agent side, treat it as a black box and evaluate the destination, not the route, as agents can reach the same outcome through different strategies. Brown might outline first and then write, or draft everything and then restructure, and both routes can produce a strong article. Score the final output against your quality criteria, not the intermediate steps [3].

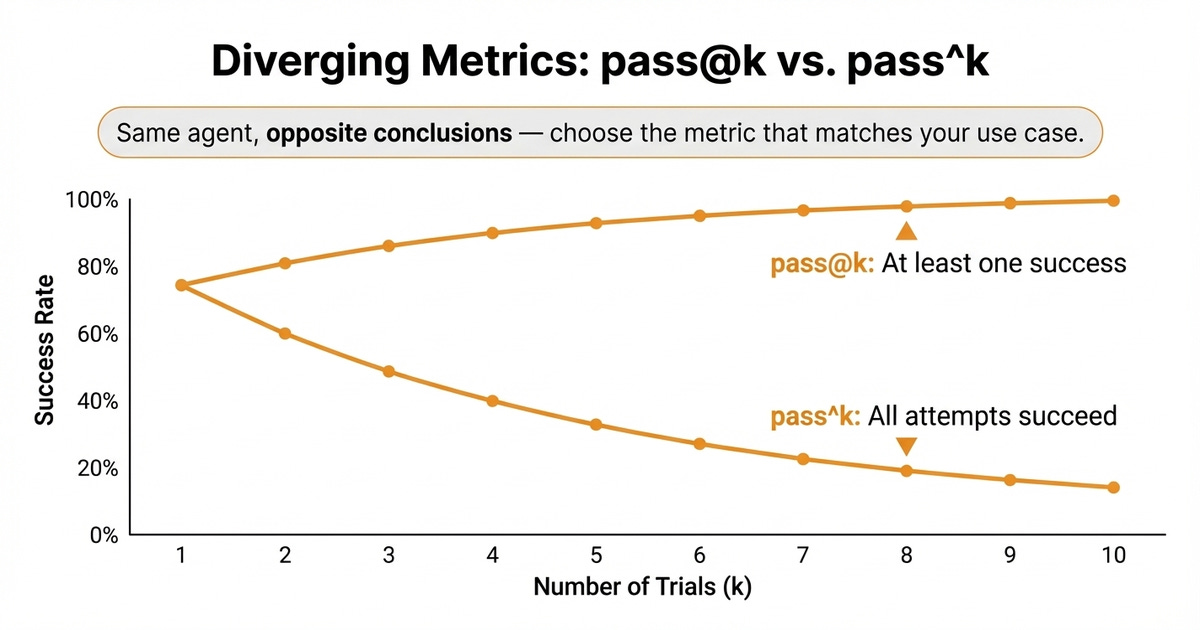

For the agent, measure reliability across multiple runs using pass@k and pass^k. pass@k tracks whether at least one out of k attempts succeeds, while pass^k tracks whether all k attempts succeed. These two metrics tell opposite stories as k grows: pass@k climbs toward 100% while pass^k drops sharply, revealing how consistent your agent really is [3].
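To see why the two metrics diverge, here is a short sketch that assumes every attempt succeeds independently with the same per-trial success rate `p`. That independence assumption is a simplification, and the function names are hypothetical.

```python
def pass_at_k(p: float, k: int) -> float:
    """Probability that at least one of k independent attempts succeeds."""
    return 1 - (1 - p) ** k


def pass_hat_k(p: float, k: int) -> float:
    """Probability that all k independent attempts succeed (pass^k)."""
    return p ** k


# Assumed per-trial success rate of 75%.
p = 0.75
for k in (1, 3, 5, 10):
    print(f"k={k:>2}  pass@k={pass_at_k(p, k):.3f}  pass^k={pass_hat_k(p, k):.3f}")
```

With a 75% per-trial success rate and k = 3, pass@k is already above 98% while pass^k falls to about 42%, so an agent that looks strong on pass@k can still be unreliable for users who need it to work every time.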

You now have the complete toolkit for evaluating your evaluator.

Demo

To fully grasp the end-to-end workflow for building AI Evals, I recommend rewatching our demo using AlignEval, an open-source tool created by Eugene Yan. It provides a streamlined interface for the exact workflow this article teaches: look at your data, label it, evaluate outputs, and optimize your evaluators:

The tool is open source and available at aligneval.com, with the source code on GitHub (eugeneyan/align-app). You can try it for free with your own data or use the prompt below to quickly generate a CSV similar to the one from the demo:

I want you to generate a CSV file with the following characteristics:

"""

* The CSV file must include the following columns:

* id: Unique identifier for each row

* input: Context used to generate output

* output: Generated text to be evaluated

* label: Ground truth (values optional but counts towards XP)

* explanation: A one-sentence explanation on why we labeled the row as 0 (PASS) or 1 (FAIL)

* 🚨 The label column only accepts binary labels, either 0 or 1.

* 0: Output PASSES your evaluation

* 1: Output FAILS your evaluation

"""

that contains 100 rows

The goal of the CSV file is to implement a dataset to build an LLM Judge evaluator.

We want to create some mock, synthetic data to conceptually show how labeling, evaluating and optimizing the LLM judge would look like, based on this tool: https://aligneval.com/

Let's say that we collected data from a vertical assistant agent specialized in answering work emails and Slack messages. Thus, create 100 scenarios based on these dimensions:

* feature: email/slack

* scenario: executive, manager, colleague, spam email, phishing email, friend (as an exception)

* label: success/failure of properly answering the message

Where the input is a single email or Slack message or an email or Slack thread, but the output will ALWAYS be just the generated reply, whether it's email or Slack.

Make the labels a 50%/50% split between passes and fails.

Also, note that NO REPLY is an expected behavior for SPAM and phishing emails. Also, for non-essential emails or toxic or slack messages.

We used Claude Opus 4.6 within the Claude app to generate it.

Next Steps

An evaluator only earns trust when it matches expert judgment. The workflow is straightforward: measure where your judge disagrees with the expert, fix those gaps, and confirm on data the judge has never seen. Only when the judge aligns with the expert on the test set can you rely on your eval metrics.

The error analysis workflow and iterative labeling were only the tip of the iceberg. Now you see the full picture of how to build, validate, and maintain evaluators.

Next up is a specialized article focused on evaluating Retrieval-Augmented Generation (RAG) systems.

Also, remember that this article is part of a 7-piece series on AI Evals & Observability. Here’s what’s ahead:

How to Evaluate the Evaluator ← You just finished this one

See you next Tuesday.

What’s your opinion? Do you agree, disagree, or is there something I missed?

Enjoyed the article? The most sincere compliment is to share our work.

Go Deeper

Go from zero to production-grade AI agents with the Agentic AI Engineering self-paced course. Built in partnership with Towards AI.

Across 34 lessons (articles, videos, and a lot of code), you’ll design, build, evaluate, and deploy production-grade AI agents end to end. By the final lesson, you’ll have built a multi-agent system and a capstone project where you apply everything you've learned on your own.

Three portfolio projects and a certificate to showcase in interviews. Plus a Discord community where you have direct access to other industry experts and me.

Rated 4.9/5 ⭐️ by 290+ early students — “Every AI Engineer needs a course like this.”

Not ready to commit? We also prepared a free 6-day email course to reveal the 6 critical mistakes that silently destroy agentic systems. Get the free email course.

Thanks again to Opik for sponsoring the series and keeping it free!

If you want to monitor, evaluate and optimize your AI workflows and agents:

References

[1] Arize AI. (n.d.). LLM as a Judge: Primer and Pre-Built Evaluators. Arize. https://arize.com/llm-as-a-judge/

[2] Husain, H. (n.d.). Using LLM-as-a-Judge for Evaluation. hamel.dev. https://hamel.dev/blog/posts/llm-judge/

[3] Anthropic. (n.d.). Demystifying Evals for AI Agents. Anthropic Engineering Blog. https://www.anthropic.com/engineering/demystifying-evals-for-ai-agents

Images

If not otherwise stated, all images are created by the author.

